Advances in Medical Education and Practice, Volume 9

Simulation training in palliative care: state of the art and future directions

Authors Kozhevnikov D, Morrison LJ, Ellman MS

Received 1 August 2018

Accepted for publication 26 October 2018

Published 7 December 2018 Volume 2018:9 Pages 915–924

DOI https://doi.org/10.2147/AMEP.S153630

Checked for plagiarism Yes

Review by Single anonymous peer review

Peer reviewer comments 3

Editor who approved publication: Dr Md Anwarul Azim Majumder



Dmitry Kozhevnikov,1 Laura J Morrison,1 Matthew S Ellman2

1Department of Medicine, Yale Palliative Care Program, Yale School of Medicine, New Haven, CT, USA; 2Department of Medicine, Yale School of Medicine, New Haven, CT, USA

Background: The growing need for palliative care (PC) among patients with serious illness is outstripped by the short supply of PC specialists. This mismatch calls for competency of all health care providers in primary PC, including patient-centered communication, management of pain and other symptoms, and interprofessional teamwork. Simulation-based medical education (SBME) has emerged as a promising modality to teach key skills and close the educational gap. This paper describes the current state of SBME in training of PC skills.

Methods: We conducted a systematic review of the literature reporting on simulation experiences addressing PC skills for clinical learners in medicine and nursing. We collected data on learner characteristics, the method and content of the simulation, and outcome assessments.

Results: In a total of 78 studies, 76% involved learners from medicine and 38% involved learners from nursing, while social work (6%) and spiritual care (3%) learners were significantly underrepresented. Only 16% of studies involved collaboration between participants at different training levels. The standardized patient encounter was the most popular simulation method, accounting for 68% of all studies. Eliciting treatment preferences (50%), delivering bad news (41%), and providing empathic communication (40%) were the most commonly addressed skills, while symptom management was only addressed in 13% of studies. The most common method of simulation evaluation was subjective participant feedback (62%). Only 4% of studies examined patient outcomes. In 22% of studies, simulation outcomes were not measured at all.

Discussion: We describe the current state of SBME in PC education, highlighting advances over recent decades and identifying gaps and opportunities for future directions. We recommend designing SBME for a broader range of learners and for interprofessional skill building. We advocate for expansion of skill content, especially symptom management education. Finally, evaluation of SBME in PC training should be more rigorous with a shift to include more patient outcomes.

Keywords: simulation training, palliative care, palliative medicine, standardized patient, structured clinical examination, medical education

Background

Palliative care (PC) is a relatively young medical specialty, officially recognized as a specialty in the UK in 1987 and by the American Board of Medical Specialties in 2006.1,2 PC is defined by the World Health Organization as an “approach that improves the quality of life of patients and their families facing life threatening illness, through prevention and relief of suffering by means of early identification and impeccable assessment and treatment of pain and other problems, physical, psychosocial, and spiritual”.3 In contrast with hospice care, PC can be delivered at any stage of a serious illness and can be provided concurrently with curative or disease-modifying treatment.4 Although there is a growing need for PC among patients living with serious and life-limiting illness, there is a shortage of PC specialists with a projected absolute growth of only 1% in PC specialists in the next 20 years.5 Experts have called for a multi-faceted approach to this issue, with recommendations to increase graduate medical education funding for PC trainees6 and to educate all health professionals in primary PC skills, the basic skills that all clinicians who care for patients with serious illnesses should possess.7 In a survey of both PC specialists and non-PC specialists, the three areas of greatest importance identified for primary PC competencies were managing psychological symptoms, addressing prognosis, and managing the final hours and days of life.8

As in medical education in general, a variety of teaching methods are used in PC training. These include didactic lectures, small group workshops, self-directed paper or online learning modules, and elective or required coursework with a PC clinical team.9,10 Simulation-based medical education (SBME) has been adopted as an additional modality to deliver training in skills across multiple specialties and levels of training. The term simulation has been defined as a technique, rather than as a technology, to replace or amplify real experiences with guided experiences that evoke or replicate substantial aspects of the real world in a fully interactive manner.11 Not only has it been found to be effective in the acquisition of certain procedural clinical skills, eg, intravenous catheter placement, endotracheal intubation, and cardiopulmonary resuscitation,12 but SBME has also been used as a tool to teach PC clinical skills.

SBME: historical context

Simulation as a learning technique dates back to eighteenth century France, when Madame Du Coudray used a leather mannequin pelvis and fetal model with placenta to train midwives.13 The medical education use of actors to portray or simulate patients and clinical situations was first reported in the clinical neurology literature in 1964.14 The benefits of the standardized patient (SP) as a teaching tool were additionally demonstrated in 1968 by gynecology teaching assistants who were instrumental in teaching pelvic examination techniques in a safe environment.15 The objective structured clinical examination (OSCE) was described in 1975 by Harden et al16 as a tool in which the variables and complexity of the examination are more easily controlled, with aims that are more clearly defined. The OSCE became integrated into routine evaluation of US medical students and was eventually incorporated into the Step II Clinical Skills examination in 2004 as part of the United States Medical Licensing Examination.17 SBME experienced a monumental turning point when the first full-scale mannequin simulator for anesthesia training, Sim One, was created at the University of Southern California in 1966. It could blink, change pupil size, and open its jaw to allow for practicing endotracheal intubation.18

One of the first mentions in the literature of simulators used in education of PC skills was by Ann Faulkner, a medical educator in the UK. In 1994, she advocated that simulation provides a unique opportunity to practice communication skills by immersing learners in a safe and constructive environment.19 In the almost 25 years since Faulkner’s report, the use of SBME in PC education has expanded to address diverse skill development among many different learners in a variety of educational settings.

This review seeks to describe the current state of the art of SBME in PC education through a comprehensive and systematic analysis of the published literature. We explore the scope of SBME methods used, the PC skills taught, and the range of learners targeted. We also aim to describe the quality of outcome assessments reported and to identify important gaps in SBME in PC education and opportunities for future applications.

Methods

Literature search and sample selection

We conducted a literature search to identify publications reporting on a simulation training experience to teach PC skills. The review process took place in three steps: an initial database search, the application of inclusion and exclusion criteria, and the final selection based on relevance of the study content.

For the initial search, we identified two key concepts relevant to our topic: PC and simulation. We then identified appropriate controlled vocabulary terms through PubMed’s Medical Subject Headings (MeSH) database and Embase’s Emtree database available through the Ovid platform. The search was then performed in Embase, PubMed, and Web of Science using appropriate controlled vocabulary and syntax for each database.

Keywords identified were standardized patient, simulation training, interactive learning, simulation, structured clinical examination, OSCE, hospice, palliative medicine, supportive care, and palliative care.

MeSH terms identified were simulation training, palliative medicine, palliative care, and palliative care nursing. Emtree terms identified were simulation training and palliative therapy.

Our main inclusion criteria were 1) articles written in English and 2) articles that involved the implementation of a simulation-based exercise for the purposes of teaching PC skills. We considered simulation-based exercises to include those involving an SP actor, a role-play scenario, a computer-based learning experience, or an experience using a technologically advanced robotic simulator.

The PRISMA flow diagram in Figure 1 describes the process by which we identified and screened articles for this review. Our initial search yielded a total of 427 articles. One author (DK) screened all titles for irrelevant keywords indicative of article content inconsistent with the goals of our search. For example, articles that were primarily about basic science research, medical oncology treatment, or radiation oncology treatment simulation were excluded. A total of 282 articles were removed during this step, leaving 145 articles for further review.

Figure 1 PRISMA flow diagram illustrating the selection process of articles.

We then excluded another 67 articles that were either case reports, editorials, meeting abstracts not published in peer-reviewed journals, or literature reviews. We also excluded studies that involved solely nonclinical learners, unless they were part of an interprofessional experience with medical or nursing learners. Once inclusion and exclusion criteria were applied, our sample consisted of a final total of 78 articles (Appendix 1). We created a Microsoft Excel spreadsheet with the final study sample to facilitate the recording of coded variables.
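The screening arithmetic above can be checked with a short sketch. The counts are those reported in the text; the variable names are illustrative only and are not taken from the authors' spreadsheet.

```python
# Article counts reported in the text for each screening stage.
initial_search = 427                # articles returned by the database search
removed_by_title_screen = 282       # removed after title screening by one author
removed_by_exclusion_criteria = 67  # case reports, editorials, abstracts, reviews, etc.

# Articles remaining after each step (variable names are illustrative).
after_title_screen = initial_search - removed_by_title_screen
final_sample = after_title_screen - removed_by_exclusion_criteria

print(after_title_screen)  # 145
print(final_sample)        # 78
```

Both intermediate totals match the figures stated in the text: 145 articles after title screening and 78 in the final sample.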

Coding and analysis

We created two main categories of variables: learner characteristics and simulation characteristics. Within each, we identified variables we believed most relevant and codable to describe key aspects about the learners, types of simulation methods used, and educational content and assessment. In the first category, we coded learner profession, trainee level, and specialty/subspecialty. In the second category, we coded the simulation type, skill focus, presence or absence of debrief, and simulation assessment.

Two authors (DK and MSE) created a test sample of 20 randomly selected studies out of the 78 to independently review using the coding scheme for the variables described earlier. The purposes of the test sample were to ensure that our coding scheme was feasible and demonstrate agreement in coding prior to coding the entire sample. During the coding of the test sample, we identified two variables with low coding inter-rater reliability. The first was the category of fidelity. Although some studies described their simulation as of either low or high fidelity, most did not. We determined it was difficult to create an accurate and standardized way to code fidelity. For example, one reviewer could code an SP experience as of high fidelity, while another reviewer may only code experiences that utilized robotic mannequins as of high fidelity. Given the inexactness of an operational definition of fidelity, we decided that the level of fidelity could not be reliably coded and it was therefore removed. Initially, we were also interested in whether a reported simulation experience was designed to be formative or evaluative. However, it became apparent in our test sample that we could not reliably classify many studies as formative or evaluative as the intent of the authors was either not specified or unclear.

In looking at the characteristics of targeted learners, we first identified the codes for profession as medicine, nursing, social work, or chaplaincy. For the trainee-level variable, we created five possible codes: student, resident, fellow, practicing provider, and unspecified. We defined practicing provider as a licensed practitioner practicing independently and included physicians, advanced practice nurses, physician assistants, and registered nurses. Next, for the specialty variable, we tabulated the specialties and subspecialties of the practicing providers. We also tabulated the intended specialty of practice for residents and fellows. Student-level learners were not coded for this variable as they have not started a specialty path. This variable was coded as the data collection proceeded, with a total of six different specialties for physicians and nurses noted. Five subspecialties of medicine and five subspecialties of pediatrics were also coded.

For simulation type, the possible values included SP encounter, role play/sociodrama, simulation laboratory, and computer-based exercise. The skill focus variable had six possible codes: delivering bad news, empathic communication, eliciting treatment preferences, symptom management, team communication, and others. Presence or absence of debrief was coded as either yes or no. Our last variable, simulation assessment, evaluated if and how simulation experiences were assessed for educational impact and efficacy. Based on our familiarity with the literature, we created four possible codes for this variable: participant feedback, post-simulation OSCE, patient outcomes, and not assessed. Participant feedback was coded if a study collected data from the participants before and after simulation in various areas. These included ratings of self-efficacy regarding performance applying certain PC skills, comfort with topics of serious illness and end-of-life care, attitudes toward these topics, and knowledge of palliative or end-of-life clinical care. This code was also chosen if a study surveyed participants on whether the simulation met its stated learning goals.

After reaching consensus on coding each variable in the test sample, we assembled the final coding scheme. Each study was independently read and coded by one investigator (DK or MSE) using a numerical coding scheme in Microsoft Excel. Each qualitative code was assigned a number. Once all the studies were coded, columns in the Microsoft Excel spreadsheet were organized by numerical order. Basic statistics were calculated by tabulating frequencies within each variable. Of note, given the variety of methods used in the studies we reviewed, there were multiple variables that could be assigned more than one code. For example, if a study involved physicians, nursing participants, and social work participants, three codes were assigned for the profession variable.
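As a minimal sketch of this kind of tabulation, the following shows how per-variable frequencies can be computed when a study may carry more than one code. The numeric codes and the four example studies are hypothetical, as the published report does not give its numbering or the underlying spreadsheet; the sketch only illustrates why percentages within a variable can sum to more than 100%.

```python
from collections import Counter

# Hypothetical numeric codes for the profession variable; the article does
# not publish the numbering it actually used.
PROFESSION_CODES = {1: "medicine", 2: "nursing", 3: "social work", 4: "chaplaincy"}

# Hypothetical coded studies: each set holds every code assigned to one study.
studies = [
    {1},        # physicians only
    {1, 2},     # physicians and nurses
    {2},        # nurses only
    {1, 2, 3},  # physicians, nurses, and social workers
]

def study_frequencies(coded_studies, code_labels):
    """Return, for each code, the percentage of studies that include it."""
    counts = Counter(code for codes in coded_studies for code in codes)
    total = len(coded_studies)
    return {label: 100 * counts[code] / total for code, label in code_labels.items()}

freqs = study_frequencies(studies, PROFESSION_CODES)
for label, pct in freqs.items():
    print(f"{label}: {pct:.0f}%")
```

Because the unit of analysis is the study rather than the individual code, the percentages in this toy sample add up to 175%, in the same way that the 76% and 38% reported for medicine and nursing in the Results overlap.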

Results

Learner characteristics are shown in Table 1. When examining profession, 38% of studies involved either nursing students or practicing nurses. On the other hand, physician learners including practicing physicians, fellows, residents, and medical students were included in 76% of the studies reviewed. Learners from social work and spiritual care were less frequently involved, with only 6% and 3% of the reviewed articles including participants from these fields. On the medical side, specialties and subspecialties were recorded for 40 studies. Internal medicine and family medicine were combined into one group due to similarities in training and scope of practice. These learners were involved in 40% of studies. Many subspecialties of internal medicine were represented, including critical care (15%), oncology (13%), hospice and palliative medicine (13%), geriatrics (8%), and nephrology (5%). Pediatric learners comprised 20% of the non-student subgroup and involved pediatric residents and attendings. Pediatric subspecialties noted were pediatric intensive care (10%), neonatal intensive care (5%), oncology (3%), emergency medicine (EM, 3%), and cardiology (3%).

The two surgical specialties represented were general surgery (8%) and obstetrics-gynecology (5%). Lastly, EM (8%) and neurology (3%) were also noted in a minority of the sample.

When examining the results for trainee level, students of both medicine and nursing were represented at the highest frequency, with almost half of the studies reviewed including students. Fellows were involved in only 18% of the studies. Only 16% of the studies reviewed integrated learners of more than one level of training, demonstrating a lack of collaborative teaching across trainee level.

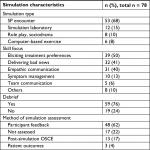

Simulation characteristics are shown in Table 2. The most common modality of SBME was the use of SPs outside of simulation laboratory settings, found in 68% of studies reviewed. Only 15% of studies utilized a technologically advanced robotic simulator. Role play and sociodrama were seen in only 10% of the studies and computer-based learning modalities were seen in only 8% of the studies.

When examining the variability among the skills being either taught or tested by simulation-based training, eliciting treatment preferences (50%), delivering bad news (41%), and empathic communication (40%) were the most common. Symptom management was addressed in only 13% of the studies, with cancer-related pain addressed in nine of these 10 studies. Delirium was also addressed in one study, along with pain and nausea.20 One study utilized a technologically advanced mannequin to test PC trainees in the UK on their management of massive hemorrhage, dyspnea, and opioid toxicity.21 The least commonly addressed skill in simulation experiences was team communication, addressed in only 6% of the studies.

The most common method of simulation assessment was participant feedback (62%). There was a post-simulation OSCE to evaluate improvement in skills in 17% of studies. Only three studies evaluated patient outcomes as a measure of effectiveness of the simulation experience. In 22% of studies, there was no report of an outcome evaluation of the simulation. Lastly, a debriefing portion was included in 76% of the studies.

Discussion

Using robust search methods, we identified 78 studies meeting our inclusion/exclusion criteria and conducted a systematic review to describe the current state of SBME in PC education. We identified several key trends regarding targeted learners, simulation methods, educational content, and assessments of efficacy. These trends, along with important gaps identified, are summarized in the following paragraphs.

We found that most reports included physician learners, with fewer involving nursing. We observed that SBME has been used in training with all levels of learners ranging from students to practicing providers. Among studies with physician learners, most were in medicine and its subspecialties. We identified a paucity of reports involving learners in surgery and certain medical subspecialties, such as cardiology and hepatology, who often care for patients with serious illness and PC needs. Notably, independently practicing physicians and nurses were represented in 22% of the studies, which is encouraging as it indicates that simulation is utilized in continuing PC education. Interprofessional learning was very rare, illustrated by the infrequent integration of social work (6%) and chaplaincy (3%) trainees. Given the primacy of the interprofessional team approach to PC, much opportunity exists for targeting these groups in the future.

We identified a variety of simulation methods, with SP encounters making up a majority (68%) of simulation experiences. This may be explained by the predominance of communication skills training in SBME in PC training. SP encounters provide learners with a safe space to practice skills they may find challenging or intimidating with real patients, such as delivering bad news and eliciting goals of care.22 SPs also, as the name implies, provide a level of standardization of the educational experience and can often be tailored to the desired skill set and level of learner. However, PC curricula that utilize SPs can be costly, with one study citing a three-station PC OSCE for 12 learners costing $6800.23 In addition to financial cost, educators may face challenges incorporating PC specialists into program development due to the known workforce shortages in the field. Although we acknowledge that the use of SPs will not replace the learning that occurs with real patients, we believe it can augment these experiences by fostering the development of skills in a safe space, with the potential for debrief and focused feedback.

Much less common (15% of identified studies) is the use of the simulation laboratory with a technologically advanced robotic simulator. We postulate several advantages to using a robotic simulator over an SP, if available. First, a simulator can mimic an array of physiologic processes with human responses that a human volunteer cannot, such as abnormal heart and lung sounds, which would be especially useful for learners in a scenario with a dying patient. This feature could also foster learning in symptom management skills. In addition, educators can design patient scenarios with greater potential for standardization by minimizing variations associated with SPs. Lastly, these simulators are useful in scenarios with pediatric patients, due to the limitations of using child SPs. Costs can be prohibitive, with one technologically advanced simulator cited to cost $75,000,24 and institutions will need to perform cost–utility analyses for such an investment.25

Regarding educational content, most studies focused on communication skills, while only 13% of the studies addressed symptom management skills. Although PC emphasizes communication skills, it also prioritizes the treatment of symptoms that negatively impact the quality of life.4 This gap in symptom management and the lack of interprofessional learning are inconsistent with published PC competencies for medical students and residents.26 These competencies include expectations that medical students would be able to “assess pain systematically”, “assess non-pain symptoms”, and “describe roles of members of an interdisciplinary team”.

A range of methods were utilized to evaluate the simulation experience. Regarding participant feedback, participants were frequently surveyed on their comfort, confidence, and perception of self-efficacy regarding the targeted skills or tasks. Most studies reported comparisons of pre- and post-simulation survey data. Several studies used participants’ self-efficacy ratings alone,23,27 while others combined self-efficacy ratings with a performance-based evaluation, such as an OSCE28 or an online performance with a virtual patient.29 The Kirkpatrick model of training ranks educational outcomes from Levels 1 to 4: Level 1 is “Did the learner perceive value?”, Level 2 is “Did the learner’s knowledge or skill improve?”, Level 3 is “Did the knowledge or skill transfer to behavior?”, and Level 4 is “Did the training program lead to improved patient outcomes?”.30 We identified that a majority (62%) of assessments were based on ratings from participants, indicating Kirkpatrick Level 1 or 2 outcomes. Seventeen percent of studies assessed efficacy with a post-simulation OSCE, consistent with Kirkpatrick Level 3. Only three studies assessed patient outcomes consistent with Kirkpatrick Level 4. In acknowledging the challenge of assessing these rigorous outcomes, we describe these three studies here.

Trickey et al implemented an SBME with SPs to improve surgical residents’ patient-centered communication skills. The Communication Assessment Tool (CAT) was used during scenarios involving delivery of bad news to a caregiver of a patient with postoperative intracerebral hemorrhage and a patient with cholelithiasis with contraindications for cholecystectomy. In the outcome assessment, actual surgical patients reported that the participating surgeons provided explanations with improved clarity, as surveyed in the Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS).31 Kruser et al utilized SPs to teach attending surgeons “Best case/Worst case” phrasing for high-risk surgical discussions with elderly patients. Patients and families found that participating surgeons established appropriate expectations and provided clarity helpful in their decision-making process.32 Curtis et al randomized internal medicine residents and nurse practitioner students to either simulation-based communication training or usual education. The primary outcome, patient-reported quality of communication, was not significantly improved in the intervention group.33 These studies demonstrate the feasibility of incorporating high-level educational outcomes in SBME studies.

Beyond the results of our structured coding analysis, we identified four noteworthy themes, described below, that seem important for educators interested in integrating simulation methods into PC education.

How SBME addresses specific challenges in PC education

Learners can be challenged in their comfort in learning difficult communication tasks, contending with both emotion and time pressures. For example, when tasked with delivering news of an amyotrophic lateral sclerosis diagnosis to an SP, medical students expressed feelings of powerlessness and described these conversations as emotionally draining, and some felt responsible for the diagnosis.34 At other times, the challenges were practical, such as feeling external pressures in a productivity-oriented environment.35

SBME has been shown to be effective in transcending some of these barriers by simulating real-life scenarios in a setting where learners feel safe to practice difficult skills. In a qualitative study with medical students, educators used a simulation laboratory experience to teach communication skills concerning cardiopulmonary resuscitation with patients and caregivers.36 In the debrief, students uniformly expressed feeling unwelcome to participate in discussions about death and dying on the wards. Some students reported that they had been asked to leave the room by patients and caregivers. Simulation experiences may offer students the opportunity to practice skills difficult in “real-life” clinical environments. Participants also reported that the robotic simulator increased the realism of the experience, which enhanced its value compared to role plays they previously experienced.

Much like their physician colleagues, nurses often struggle with feeling unprepared in end-of-life care.37 Venkatasalu et al compared simulation-based end-of-life care teaching with classroom-based end-of-life care teaching among 187 first-year nursing school students. Simulation-based teaching led to an improved emotional experience for nurses in their first clinical placement.38 The ability to meet the need for improved end-of-life nursing education is limited by lack of comfort in this area among many nursing instructors.39 SBME can be incorporated into nursing education to provide standardized opportunities for teaching and assessment of PC skills.

Framing and language

SBME is a useful method to study and teach provider communication behaviors because it allows researchers to replicate particular clinical scenarios in ways not possible in real patient encounters. Several studies investigated the language used in discussions with patients, as well as how treatment options were framed.40–42

When medical students in Switzerland discussed hepatic metastasis with SPs, they verified the SPs’ understanding of the terms “palliative” and “metastasis” in only 22% of interviews.40 Another study examined how preferences were elicited and options were framed during code status discussions (CSDs). Internal medicine residents were randomized to either a simulation-based CSD curriculum including an SP encounter or no CSD curriculum, and subsequently, all were tested with another SP CSD. Intervention residents were more likely than controls to explore patient values and goals and less likely to frame the decision as one to be made solely by the patient.41 A third study included hospitalist, EM, and critical care physicians in a technologically advanced robotic simulation with a family member of a critically ill patient. Participants broached life-sustaining treatment differently than treatment focusing on comfort, commonly framing life-sustaining treatment as necessary while framing comfort measures as optional.42 These studies demonstrate that SBME allows researchers to investigate communication behaviors in a standardized setting that does not interfere with patient care.

Controversy over fidelity

Classically, the term fidelity refers to the degree to which a simulator can reproduce a real-world environment.43 High fidelity is commonly used to describe SBME utilizing technologically advanced robotic simulators. However, as we identified in coding our studies, the operational definition of fidelity has its limitations. Hamstra et al argued that although fidelity is defined as the degree to which a simulation feels real, it does not necessarily capture the extent to which an experience assists learners in improving their skills. They recommend abandoning the term fidelity and substituting terms such as physical resemblance and functional task alignment.44 Rather than focusing on the fidelity of a simulation, Schoenherr et al45 argued that educators should identify what features of a simulator are critical to learning.

When developing PC skills training, educators must determine which methods will assist the learner in practicing and strengthening the skills of focus. For example, using a technologically advanced robot without a human SP to interact with would not be effective to teach empathic communication. Furthermore, the transfer of learning and level of fidelity were not strongly correlated when a high-fidelity mannequin was compared to low-fidelity methods in teaching skills such as auscultation, cardiopulmonary resuscitation, and certain surgical techniques.46 In spite of these nuances in terminology, learners have reported that technologically advanced simulation centers contribute to the realism of the experience.21 However, learners also reported that the addition of a human SP to a robotic simulator was what made the experience “realistic, powerful and moving”.47

Unique simulation methods

SBME allows PC educators to be innovative when designing educational experiences. We identified two unique simulation methods – the virtual patient and the sociodramatic method. Tan et al48 implemented a virtual patient case in a medical student clerkship to improve end-of-life care education. The “chart your own adventure”-style case guided students through a 4–6-month clinical course of a cancer patient from new bony metastases to hospice admission and death. Students experienced the simulation of the longitudinal care of a terminal patient, with opportunities to learn skills in symptom management and psychosocial support. Students’ knowledge scores increased significantly, and 91% of the students rated the realism as good to excellent.

Even more technologically innovative is the use of avatars to teach communication skills. Andrade et al29 investigated the application of avatars or graphical character representations to deliver bad news in a three-dimensional computer-generated simulated environment. Although learners’ self-efficacy ratings improved, they noted an inability to read non-verbal cues in the avatar SPs. Benefits of both these innovative methods include the ability to manipulate characteristics of the patient and to provide distance learning.

Unlike the virtual patient, sociodrama relies heavily on learners’ physical expression and interactivity. Sociodrama is a type of role-play activity that uses group enactments of life situations to help deepen understanding of interpersonal conflicts.49 In a study by Baile and Walters,50 the only one in our sample to use sociodrama, role reversal methods helped learners understand hidden feelings of loss behind a family’s emotional reactions. Although these unique methods are rare, they represent creative applications of SBME to PC education that may diversify the experiences of learners who are exposed primarily to SP encounters.

Limitations

Our study has several limitations. First, our exclusion criteria might have introduced publication bias, as reports available only in abstract form were excluded. However, we believe that full peer review was important for inclusion. Second, as discussed earlier, we did not include certain variables of interest, such as fidelity and study intent (formative vs evaluative), because we were unable to code them reliably from the information in the published reports. Investigators should be mindful of this gap in the literature and report more information regarding the intent of the SBME. Finally, because our unit of analysis was the individual SBME report, we reported the frequency of studies that included each code within a variable and could not comment on the overall percentages of the codes within each variable.
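The distinction drawn in the final limitation can be illustrated with a minimal sketch. The data below are hypothetical toy values, not taken from this review, and the function names are our own illustrative choices. The sketch assumes that a single report can carry more than one code for a variable (for example, learner profession), which is why study-level frequencies can sum to more than 100% while code-level shares cannot.

```python
from collections import Counter

# Hypothetical toy data (NOT from the review): each report may carry
# several codes for one variable, here "learner profession".
reports = {
    "report_a": {"medicine"},
    "report_b": {"medicine", "nursing"},
    "report_c": {"nursing", "social work"},
    "report_d": {"medicine"},
}


def count_codes(reports):
    """Count how many reports include each code."""
    return Counter(code for codes in reports.values() for code in codes)


def study_level_frequency(reports):
    """Fraction of reports that include each code; may sum to more than 1."""
    counts = count_codes(reports)
    n_reports = len(reports)
    return {code: count / n_reports for code, count in counts.items()}


def code_level_share(reports):
    """Fraction of all assigned codes taken by each code; sums to 1."""
    counts = count_codes(reports)
    n_codes = sum(counts.values())
    return {code: count / n_codes for code, count in counts.items()}


freq = study_level_frequency(reports)
share = code_level_share(reports)

for code in sorted(freq):
    print(f"{code}: in {freq[code]:.0%} of reports, {share[code]:.0%} of codes")
```

In this toy example the study-level frequencies add up to 150% because two reports carry two codes each, whereas the code-level shares add up to 100%. Percentages of the first kind should therefore not be read as a partition of the literature.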

Future directions

Based on the findings of this review, we propose several recommendations for the future application of SBME in PC training. We believe that SBME offers unique opportunities to teach PC skills, and we encourage educators to identify opportunities for integrating these methods into their curricula. We advocate for the inclusion of learners from all specialties involved in the care of seriously ill patients with PC needs, as well as for advancing interprofessional training in SBME. We encourage educators to establish working relationships with simulation centers to develop SBME that teaches and assesses symptom management, especially in the dying patient. For investigators, we advocate for SBME study designs that include more rigorous outcome assessments, consistent with higher levels of the Kirkpatrick model, rather than relying primarily on participant survey data, while recognizing the challenges of collecting patient outcomes.

Conclusion

SBME in training of PC skills has advanced considerably over recent decades. Many successes have been documented, and many exciting opportunities lie ahead.

Acknowledgment

The authors thank Melissa Funaro (Harvey Cushing/John Hay Whitney Medical Library, Yale University, New Haven, CT, USA) for assisting with database search.

Disclosure

The authors report no conflicts of interest in this work.

References

1. Portenoy RK, Lupu DE, Arnold RM, Cordes A, Storey P. Formal ABMS and ACGME recognition of hospice and palliative medicine expected in 2006. J Palliat Med. 2006;9(1):21–23.

2. Saunders C. The evolution of palliative care. J R Soc Med. 2001;94(9):430–432.

3. WHO Definition of Palliative Care [homepage on the Internet]. World Health Organization. Available from: http://www.who.int/cancer/palliative/definition/en/. Accessed February 1, 2018.

4. Kelley AS, Morrison RS. Palliative care for the seriously ill. N Engl J Med. 2015;373(8):747–755.

5. Kamal AH, Bull JH, Swetz KM, Wolf SP, Shanafelt TD, Myers ER. Future of the palliative care workforce: preview to an impending crisis. Am J Med. 2017;130(2):113–114.

6. Lupu D. American academy of hospice and palliative medicine workforce task force estimate of current hospice and palliative medicine physician workforce shortage. J Pain Symptom Manage. 2010;40:899–911.

7. Quill TE, Abernethy AP. Generalist plus specialist palliative care--creating a more sustainable model. N Engl J Med. 2013;368(13):1173–1175.

8. Carroll T, Weisbrod N, O’Connor A, Quill T. Primary palliative care education: a pilot survey. Am J Hosp Palliat Care. 2018;35(4):565–569.

9. Noguera A, Bolognesi D, Garralda E, et al. How do experienced professors teach palliative medicine in European universities? a cross-case analysis of eight undergraduate educational programs. J Palliat Med. 2018.

10. Hauer J, Quill T. Educational needs assessment, development of learning objectives, and choosing a teaching approach. J Palliat Med. 2011;14(4):503–508.

11. Gaba DM. The future vision of simulation in health care. Qual Saf Health Care. 2004;13(1):i2–i10.

12. McGaghie WC, Issenberg SB, Cohen ER, Barsuk JH, Wayne DB. Does simulation-based medical education with deliberate practice yield better results than traditional clinical education? A meta-analytic comparative review of the evidence. Acad Med. 2011;86(6):706–711.

13. Moran ME. Enlightenment via simulation: “crone-ology’s” first woman. J Endourol. 2010;24(1):5–8.

14. Barrows HS, Abrahamson S. The programmed patient: a technique for appraising student performance in clinical neurology. J Med Educ. 1964;39:802–805.

15. Singh H, Kalani M, Acosta-Torres S, El Ahmadieh TY, Loya J, Ganju A. History of simulation in medicine: from Resusci Annie to the Ann Myers Medical Center. Neurosurgery. 2013;73(suppl_1):S9–S14.

16. Harden RM, Stevenson M, Downie WW, Wilson GM. Assessment of clinical competence using objective structured examination. Br Med J. 1975;1(5955):447–451.

17. Rosen KR. The history of medical simulation. J Crit Care. 2008;23(2):157–166.

18. Abrahamson S, Denson JS, Wolf RM. Effectiveness of a simulator in training anesthesiology residents. J Med Educ. 1969;44(6):515–519.

19. Faulkner A. Using simulators to aid the teaching of communication skills in cancer and palliative care. Patient Educ Couns. 1994;23(2):125–129.

20. Pereira J, Palacios M, Collin T, et al. The impact of a hybrid online and classroom-based course on palliative care competencies of family medicine residents. Palliat Med. 2008;22(8):929–937.

21. Walker LN, Russon L. Does simulation have a role in palliative medicine specialty training? BMJ Support Palliat Care. 2016;6(4):479–485.

22. Saylor J, Vernoony S, Selekman J, Cowperthwait A. Interprofessional education using a palliative care simulation. Nurse Educ. 2016;41(3):125–129.

23. Corcoran AM, Lysaght S, Lamarra D, Ersek M. Pilot test of a three-station palliative care observed structured clinical examination for multidisciplinary trainees. J Nurs Educ. 2013;52(5):294–298.

24. de Giovanni D, Roberts T, Norman G. Relative effectiveness of high- versus low-fidelity simulation in learning heart sounds. Med Educ. 2009;43(7):661–668.

25. Maloney S, Haines T. Issues of cost-benefit and cost-effectiveness for simulation in health professions education. Adv Simul. 2016;1:13.

26. Schaefer KG, Chittenden EH, Sullivan AM, et al. Raising the bar for the care of seriously ill patients: results of a national survey to define essential palliative care competencies for medical students and residents. Acad Med. 2014;89(7):1024–1031.

27. Arnold RM, Back AL, Barnato AE, et al. The Critical Care Communication project: improving fellows’ communication skills. J Crit Care. 2015;30(2):250–254.

28. Hope AA, Hsieh SJ, Howes JM, et al. Let’s talk critical, development and evaluation of a communication skills training program for critical care fellows. Ann Am Thorac Soc. 2015;12(4):505–511.

29. Andrade AD, Bagri A, Zaw K, Roos BA, Ruiz JG. Avatar-mediated training in the delivery of bad news in a virtual world. J Palliat Med. 2010;13(12):1415–1419.

30. Kirkpatrick DL, Kirkpatrick JD. Evaluating Training Programs: The Four Levels. 3rd ed. San Francisco, CA: Berrett-Koehler; 2006.

31. Trickey AW, Newcomb AB, Porrey M, et al. Two-year experience implementing a curriculum to improve residents’ patient-centered communication skills. J Surg Educ. 2017;74(6):e124–e132.

32. Kruser JM, Taylor LJ, Campbell TC, et al. “Best Case/Worst Case”: Training surgeons to use a novel communication tool for high-risk acute surgical problems. J Pain Symptom Manage. 2017;53(4):e715:711–719.

33. Curtis JR, Back AL, Ford DW, et al. Effect of communication skills training for residents and nurse practitioners on quality of communication with patients with serious illness: a randomized trial. JAMA. 2013;310(21):2271–2281.

34. Schellenberg KL, Schofield SJ, Fang S, Johnston WS. Breaking bad news in amyotrophic lateral sclerosis: the need for medical education. Amyotroph Lateral Scler Frontotemporal Degener. 2014;15(1-2):47–54.

35. Epstein RM, Duberstein PR, Fenton JJ, et al. Effect of a patient-centered communication intervention on oncologist-patient communication, quality of life, and health care utilization in advanced cancer: the voice randomized clinical trial. JAMA Oncol. 2017;3(1):92–100.

36. Hawkins A, Tredgett K. Use of high-fidelity simulation to improve communication skills regarding death and dying: a qualitative study. BMJ Support Palliat Care. 2016;6(4):474–478.

37. Cavaye J, Watts JH. End-of-life education in the pre-registration nursing curriculum: Patient, carer, nurse and student perspectives. J Res Nurs. 2012;17(4):317–326.

38. Venkatasalu MR, Kelleher M, Shao CH. Reported clinical outcomes of high-fidelity simulation versus classroom-based end-of-life care education. Int J Palliat Nurs. 2015;21(4):179–186.

39. Kopka JA, Aschenbrenner AP, Reynolds MB. Helping students process a simulated death experience: integration of an NLN ACE.S evolving case study and the ELNEC curriculum. Nurs Educ Perspect. 2016;37(3):180–182.

40. Bourquin C, Stiefel F, Mast MS, Bonvin R, Berney A, Well BA. Well, you have hepatic metastases: use of technical language by medical students in simulated patient interviews. Patient Educ Couns. 2015;98(3):323–330.

41. Sharma RK, Jain N, Peswani N, Szmuilowicz E, Wayne DB, Cameron KA. Unpacking resident-led code status discussions: results from a mixed methods study. J Gen Intern Med. 2014;29(5):750–757.

42. Lu A, Mohan D, Alexander SC, Mescher C, Barnato AE. The language of end-of-life decision making: a simulation study. J Palliat Med. 2015;18(9):740–746.

43. Levine A, Demaria S, Schwartz A, Sim A, editors. The Comprehensive Textbook of Healthcare Simulation. New York: Springer; 2014.

44. Hamstra SJ, Brydges R, Hatala R, Zendejas B, Cook DA. Reconsidering fidelity in simulation-based training. Acad Med. 2014;89(3):387–392.

45. Schoenherr JR, Hamstra SJ. Beyond fidelity: deconstructing the seductive simplicity of fidelity in simulator-based education in the health care professions. Simul Healthc. 2017;12(2):117–123.

46. Norman G, Dore K, Grierson L. The minimal relationship between simulation fidelity and transfer of learning. Med Educ. 2012;46(7):636–647.

47. Youngblood AQ, Zinkan JL, Tofil NM, White ML. Multidisciplinary simulation in pediatric critical care: the death of a child. Crit Care Nurse. 2012;32(3):55–61.

48. Tan A, Ross SP, Duerksen K. Death is not always a failure: outcomes from implementing an online virtual patient clinical case in palliative care for family medicine clerkship. Med Educ Online. 2013;18(1):22711.

49. Blatner A, Blatner A. Foundations of Psychodrama: History, Theory and Practice. 3rd ed. New York: Springer Publishing Company; 1988.

50. Baile WF, Walters R. Applying sociodramatic methods in teaching transition to palliative care. J Pain Symptom Manage. 2013;45(3):606–619.

© 2018 The Author(s). This work is published and licensed by Dove Medical Press Limited. The full terms of this license are available at https://www.dovepress.com/terms.php and incorporate the Creative Commons Attribution - Non Commercial (unported, v3.0) License.

By accessing the work you hereby accept the Terms. Non-commercial uses of the work are permitted without any further permission from Dove Medical Press Limited, provided the work is properly attributed. For permission for commercial use of this work, please see paragraphs 4.2 and 5 of our Terms.
