Advances in Medical Education and Practice, Volume 7

Promoting interprofessionalism: initial evaluation of a master of science in health professions education degree program

Authors Lamba S, Strang A, Edelman D, Navedo D, Soto-Greene M, Guarino A

Received 1 October 2015

Accepted for publication 11 November 2015

Published 5 February 2016 Volume 2016:7 Pages 51–55

DOI https://doi.org/10.2147/AMEP.S97482

Checked for plagiarism Yes

Review by Single anonymous peer review


Editor who approved publication: Dr Md Anwarul Azim Majumder

Sangeeta Lamba,1 Aimee Strang,2 David Edelman,3 Deborah Navedo,4 Maria L Soto-Greene,1 Anthony J Guarino4

1Department of Emergency Medicine, New Jersey Medical School, Rutgers, The State University of New Jersey, Newark, NJ, 2Department of Pharmacy Practice, Albany College of Pharmacy and Health Sciences, Albany, NY, 3Department of Surgery, Wayne State University School of Medicine, Detroit, MI, 4Health Professions Education Program, Massachusetts General Hospital Institute of Health Professions, Boston, MA, USA

Abstract: This survey study assessed former students’ perceptions of how well a newly implemented master’s in health professions education degree program achieved its academic aims. These academic aims were operationalized by an author-developed scale assessing the following domains: a) developing interprofessional skills and identity; b) acquiring new academic skills; and c) providing a student-centered environment. The respondents represented a broad range of health care providers, including physicians, nurses, and occupational and physical therapists. Generalizability theory was applied to partition the variance of the scores. Students overwhelmingly responded that the program successfully achieved its academic aims.

Keywords: health professions education, program evaluation and survey development, master’s degree, interprofessional education, G-theory, faculty development, teacher training

Introduction

The Institute of Medicine (IOM) has declared that “health professionals should be educated to deliver patient-centered care as members of an interdisciplinary team …”1 The IOM has documented that patients are more likely to receive safe, quality care when health-care professionals communicate and work together effectively. Consequently, interprofessional education (IPE) programs in health professions education have expanded in number over the past 2 decades.2,3 Not surprisingly, the perceived development needs of health educators tend to be similar across professions.4 Health professionals who ultimately enroll in interprofessional degree programs seek to increase competency in practice, research, teaching, and patient care.5,6 However, the literature offers little evidence of the effectiveness of these interprofessional health professions programs.7 One such program, intended to develop IPE-ready educators, is a newly implemented degree program leading to a master of science in health professions education with the following objectives: a) promoting an interprofessional identity; b) acquiring academic skills in teaching and scholarship; and c) providing a student-centered environment.6

The demand for graduate programs focused on education has grown exponentially in recent years following the release of the IOM recommendations.1,2,3,7 This master’s degree program shares the curricular outline with other programs, with the added elements of: 1) collaboration with two Boston-based organizations, the Harvard Macy Institute, and the Harvard Center for Medical Simulation; 2) a part-time approach for increased accessibility for working clinician educators; 3) the required teaching practicum; and 4) the explicit interprofessional aspect of the studies. In academic year 2014–2015, there were 35 enrolled students in the degree program with 21 faculty members (full-time and adjunct).

Selected students from across the health-care spectrum, including physicians, nurses, occupational and physical therapists, speech-language pathologists, and other credentialed health professionals, proceed through the program as a collaborative cohort of health professionals learning and networking together in this “low residency” program. A low or limited residency program is a form of education (often at the master’s level, such as a master’s in business administration), usually at the university level, which involves some amount of distance education and brief on-campus activities that may last a weekend or several days. The students become part of a community of interprofessional scholars committed to effectively facilitating IPE upon completion.

Purpose

The primary purpose of this study was to assess former students’ perceptions of how well a newly implemented master of science in health professions education degree program achieved its academic aims. These academic aims were operationalized by an author-developed scale assessing the following domains: a) developing interprofessional skills and identity; b) acquiring new academic skills; and c) providing a student-centered environment.

Patients and methods

Participants and instrumentation

All students who had enrolled in the first two cohorts of the degree program were the target sample for this study. An author-created survey was developed to assess student responses to three program domain outcomes: a) developing interprofessional skills and identity (five items); b) acquiring new academic skills (five items); and c) providing a student-centered environment (five items). The survey also collected some basic demographic information, including reasons for enrolling in the program. All items were scored on a 5-point Likert-type scale with higher values indicative of greater levels. The survey was voluntary, anonymous, and administered online.

The developing interprofessional skills and identity domain was assessed by the following statements: 1) learning in an interprofessional manner has increased my knowledge of other health professions; 2) interprofessional nature of the program added to the value; 3) program increased my understanding of the educational challenges faced by other professions; 4) working with interprofessional scholars improved my interprofessional communication; and 5) communicating with interprofessional program scholars has improved my interprofessional relationships at my place of employment.

The domain of acquiring new academic skills included attitude and satisfaction statements to gather student perception on the program such as the following: 1) taught me useful academic skills; 2) taught me useful clinical skills; 3) I am more satisfied with my teaching skills; 4) I am more satisfied with conducting research; and 5) I am more satisfied with structuring of my courses.

The program’s student-centered environment domain was assessed using statements addressing their satisfaction such as “I am satisfied with”: 1) the program’s course contents; 2) learning activities; 3) organization; 4) online format; and finally 5) the overall program has met my expectations.
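The three-domain, five-items-per-domain structure described above can be sketched in code. This is a minimal illustration only: the item keys (`ip1`, `ac1`, `sc1`, and so on), the use of the mean as the aggregate, and the example respondent are all assumptions, since the article does not give the survey's variable names or state how item ratings were combined into domain scores.

```python
from statistics import mean

# Hypothetical item keys; the survey's actual variable names are not given.
DOMAINS = {
    "interprofessional": ["ip1", "ip2", "ip3", "ip4", "ip5"],
    "academic_skills": ["ac1", "ac2", "ac3", "ac4", "ac5"],
    "student_centered": ["sc1", "sc2", "sc3", "sc4", "sc5"],
}


def domain_scores(response):
    """Return the mean rating (1-5 scale) per domain for one respondent.

    Averaging is an assumption; a sum score would work the same way.
    """
    scores = {}
    for domain, items in DOMAINS.items():
        ratings = [response[item] for item in items]
        if any(not 1 <= r <= 5 for r in ratings):
            raise ValueError(f"ratings for {domain} must be between 1 and 5")
        scores[domain] = mean(ratings)
    return scores


# Made-up respondent, for illustration only (not study data).
example = {
    "ip1": 5, "ip2": 5, "ip3": 4, "ip4": 4, "ip5": 2,
    "ac1": 5, "ac2": 2, "ac3": 4, "ac4": 3, "ac5": 5,
    "sc1": 4, "sc2": 4, "sc3": 4, "sc4": 4, "sc5": 4,
}
print(domain_scores(example))
```

Keeping the item-to-domain mapping in one dictionary makes it easy to reuse the same grouping for the domain-level and item-level comparisons.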

This project was approved by the Massachusetts General Hospital Institute of Health Professions IRB and the online survey participation was clearly identified as a voluntary and anonymous process.

Statistical analyses

All statistical analyses were conducted using IBM SPSS version 22.0 (IBM Corporation, Chicago, IL, USA) with alpha set at 0.05. Descriptive statistics included measures of central tendency for continuous variables and frequencies and proportions for categorical variables. Differences among domains and their respective items were assessed by conducting within-subjects analysis of variance (ANOVA). Generalizability theory (G-theory) analysis was conducted with the IBM SPSS Variance Components procedure using the minimum norm quadratic unbiased estimator. G-theory is an extension of classical test theory. In classical test theory, the score variance is partitioned into a true score portion and an error portion. In other words, a reliability coefficient of 0.80 would indicate that 20% of the score variance is due to error. In G-theory, the score variance is partitioned into many variance components. In our study, the variance components were the following: a) participant; b) domain; c) item; d) participant by domain; e) participant by item; f) domain by item; and g) participant by domain by item. G-theory has the advantage of analyzing multiple sources of variability (including interaction effects) simultaneously in a single analysis.8 This provides the researcher insights into how to reduce the error portion of the score variance.8,9 It is typically desirable for the following variances to be large: a) participant (also known as systematic variance); b) item; and c) domain, which indicates that these component scores are able to differentiate the participants. It is also desirable that the interaction components have smaller variances, which indicates consistency among the interactions. Lastly, G-theory also produces an absolute (criterion-referenced) reliability coefficient (ie, Phi coefficient) and a relative (norm-referenced) reliability coefficient.8
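To make the idea of partitioning score variance concrete, the following sketch estimates variance components for a much simpler design than the one analyzed here: participants crossed with items only, with one rating per cell. It uses the textbook ANOVA expected-mean-squares estimators, not the minimum norm quadratic unbiased estimator of the SPSS procedure, and the ratings are made up for illustration. With one rating per cell, the participant-by-item interaction cannot be separated from residual error, so the two are reported together.

```python
from statistics import mean


def variance_components(scores):
    """Estimate variance components for a crossed participant x item design.

    scores: one row per participant, one column per item.
    Uses ANOVA expected mean squares (an assumption; not MINQUE).
    Negative estimates are set to zero.
    """
    n_p, n_i = len(scores), len(scores[0])
    grand = mean(x for row in scores for x in row)
    p_means = [mean(row) for row in scores]
    i_means = [mean(row[j] for row in scores) for j in range(n_i)]

    ss_p = n_i * sum((m - grand) ** 2 for m in p_means)
    ss_i = n_p * sum((m - grand) ** 2 for m in i_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_res = ss_total - ss_p - ss_i

    ms_p = ss_p / (n_p - 1)
    ms_i = ss_i / (n_i - 1)
    ms_res = ss_res / ((n_p - 1) * (n_i - 1))

    return {
        "participant": max((ms_p - ms_res) / n_i, 0.0),
        "item": max((ms_i - ms_res) / n_p, 0.0),
        "participant_x_item": max(ms_res, 0.0),  # confounded with error
    }


def percent_of_total(components):
    """Express each variance component as a percentage of their sum."""
    total = sum(components.values())
    return {name: 100 * value / total for name, value in components.items()}


# Made-up ratings (3 participants x 3 items), not study data.
ratings = [[5, 4, 5], [4, 4, 3], [2, 3, 3]]
components = variance_components(ratings)
print({k: round(v, 1) for k, v in percent_of_total(components).items()})
# {'participant': 62.5, 'item': 0.0, 'participant_x_item': 37.5}
```

In this toy data set most of the variance is attributable to participants, which is the pattern described above as desirable: the scores differentiate the respondents.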

Results

Participants

A sample of n=15 graduates responded to the survey, which represented a response rate of approximately 60%. The group was quite diverse in: a) years in academia (mean [M]=12.93, standard deviation [SD]=8.71, range 2–30); b) years in clinical practice (M=14.64, SD=9.77, range 4–35); c) time (years) since last enrolled in a formal educational program (M=11.20, SD=6.09, range 4–25); and d) age (M=44.40, SD=7.78, range 34–60). Professionally, their responsibilities (measured as a percentage of time) were mostly administrative (M=35, SD=24, range 2–85), followed by patient care (M=18, SD=18, range 0–50), clinical teaching (M=18, SD=22, range 0–90), academic teaching (M=15, SD=13, range 0–50), and research (M=10, SD=8, range 0–25); other activities occupied the remaining time. Thus, this program attracts diverse learners who are at various stages in their careers and who are committed to both academic and clinical education in their fields.

The three most cited reasons for enrolling in the degree program were: 1) self-development (92%); 2) increase in scholarship (88%); and 3) advancement in present career (75%). All participants also noted that the Harvard Macy collaboration (credits offered toward the master’s degree program) was “extremely influential” in their decision to enroll in the degree program. Participants stated in the open-ended comments section that the Harvard Macy collaboration provided the ability to network with other educators. The quality and expertise of the diverse interprofessional faculty were also cited, with statements such as “expert cast of faculty from diverse backgrounds and countries” and “the ability to draw excellent speakers from many professions including business and engineering”.

The findings from the G-study demonstrated that survey scores were able to differentiate the participants, as evidenced by the large participant variance component (42.59% of the total score variance), indicating that the participants’ perceptions varied from one another in their response scores. Likewise, the three-way effect of participant by domain by item, the two-way effect of domain by item, and the one-way effect of domain all demonstrated relatively large variances, indicating that the context of the item was interpreted differently among the participants. There was an impressive consistency among the following variance components: a) items; and b) the participant by item effect, indicating that the participants’ interpretation of the items in each domain was consistent (Table 1).

| Table 1 Percent of effects |

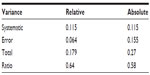

The relative error variance is the aggregate of the variance components that would affect the rank order of the scores. These components are (participant by domain), (participant by item), and (participant by domain by item), resulting in a relative error variance of 0.064. The total relative score variance is 0.179. The relative reliability coefficient, the ratio of the participants’ score variance (systematic variance, 0.115) to the total relative score variance (0.179), was 0.64. The absolute error variance is the aggregate of all variance components other than the participant component, because each of them affects the reliability of the scores. The aggregate of these components is 0.155. The total absolute score variance is 0.270, which produced an absolute reliability coefficient of 0.580 (Table 2).

| Table 2 Variance component effects |

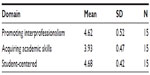
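The relative reliability arithmetic can be retraced from the values reported in the text (participant variance 0.115, relative error variance 0.064, absolute error variance 0.155). The sketch below is only a check of that arithmetic; the function name `reliability` is an illustrative choice, and the absolute coefficient is not recomputed because the individual component values of Table 2 are not reproduced in the text.

```python
def reliability(participant_var, error_var):
    """Ratio of participant variance to participant-plus-error variance."""
    return participant_var / (participant_var + error_var)


participant_var = 0.115     # reported participant (systematic) variance
relative_error_var = 0.064  # reported relative error variance
absolute_error_var = 0.155  # reported absolute error variance

total_relative = participant_var + relative_error_var
total_absolute = participant_var + absolute_error_var
relative_coefficient = reliability(participant_var, relative_error_var)
participant_share = 100 * participant_var / total_absolute

print(round(total_relative, 3))        # 0.179
print(round(relative_coefficient, 2))  # 0.64
print(round(total_absolute, 3))        # 0.27
print(round(participant_share, 2))     # 42.59
```

The last line reproduces the 42.59% participant share of total score variance reported for the G-study.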

The descriptive statistics of the aggregated scores for the three domains, a) developing interprofessional skills and identity; b) acquiring new academic skills; and c) providing a student-centered environment, are presented in Table 3. Results of the ANOVA indicated that both the developing interprofessional skills domain and the program’s student-centered environment domain were rated significantly higher than acquiring new academic skills. There was no difference between developing interprofessional skills and the program’s student-centered environment.

| Table 3 Descriptive statistics |
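For readers unfamiliar with within-subjects ANOVA, the following sketch shows how the F statistic for a one-way repeated measures design is obtained when each participant contributes one score per condition (here, per domain). It is an illustration under stated assumptions, not the SPSS procedure used in the study: the scores are made up, sphericity is not tested or corrected for, and no P-value is computed because the Python standard library has no F distribution.

```python
from statistics import mean


def repeated_measures_f(scores):
    """One-way repeated measures ANOVA F statistic.

    scores: one row per participant, one column per condition.
    Returns (F, df_conditions, df_error).
    """
    n, k = len(scores), len(scores[0])
    grand = mean(x for row in scores for x in row)

    # Between-condition, between-participant, and total sums of squares.
    ss_cond = n * sum(
        (mean(row[j] for row in scores) - grand) ** 2 for j in range(k)
    )
    ss_subj = k * sum((mean(row) - grand) ** 2 for row in scores)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_cond - ss_subj

    df_cond, df_error = k - 1, (n - 1) * (k - 1)
    if ss_error <= 0:
        raise ValueError("no residual variation; F is undefined")
    f_stat = (ss_cond / df_cond) / (ss_error / df_error)
    return f_stat, df_cond, df_error


# Made-up domain scores for 4 participants x 3 domains, not study data.
domain_scores_by_participant = [[5, 3, 5], [4, 3, 4], [5, 4, 4], [4, 2, 5]]
print(repeated_measures_f(domain_scores_by_participant))
```

Removing the between-participant sum of squares from the error term is what distinguishes this from a between-groups ANOVA: each respondent serves as their own control across the domains.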

A series of repeated measures ANOVA were conducted to assess any significant differences among the items in each domain. The results of the items comprising the domains are presented in Table 4.

| Table 4 Item descriptive statistics |

Within the interprofessional skills and identity domain, only improvement in interprofessional relationships at the place of employment was rated significantly lower than the other four items. There were no differences among the other items. Within the academic skills domain, both academic skill development and structuring of courses were rated significantly higher than the other three items. Not surprisingly, clinical skill development was rated significantly lower than all the other items.

There were no significant differences among the student-centered items.

Discussion

Findings of our study support the efficacy of the degree program as indicated by its domain outcomes. Students reported very high degrees of satisfaction with the program, with 96% reporting the program “met their needs”. There were no practically significant differences among the domains: a) developing interprofessional skills and identity; b) acquiring new academic skills; and c) providing a student-centered environment. It was not surprising that these scholars reported that the interprofessional nature of the program was valuable. As calls for the need to create interprofessional learning experiences at the prelicensure level increase, arming current and future educators across professions with not only common understandings of the learning experiences, but also common competencies as educators, will become essential.10 While clinician competencies to practice in an interprofessional manner are becoming more precisely defined,10 few master’s degree programs include this component in their curricula.11,12 As such, this is an area in which the uniqueness of this program has the potential to thrive. Additionally, this program was well situated within an institution of higher education that values interprofessional skills and competencies, giving it unique entree into this relatively new arena of building IPE leadership for health profession educators.13

Medical educators may seek formal master’s degree programs in health professions education in order to develop a deeper understanding of educational theory, research, and practice. Our findings indicate that the respondents reported high scores in successfully developing new skills in academic development, teaching, and course structure based on participation in this degree program. At the same time, relatively lower scores were reported for skills developed in the clinical and research domains. Few of the participants reported seeking clinical teaching skills in this program, while a sizable group reported a shortfall in their own growth as researchers. This became the focus for the next phase of curriculum development for the program. Since then, the program has addressed this identified need by adding advanced courses in measurement and advanced qualitative research methods, which became a new concentration in research methodologies. This finding may also reflect the fact that research productivity is a slower process and may take longer to realize than learning and applying a new teaching skill. This hypothesis warrants investigation in future research. These results need to be viewed cautiously, not only because of the small sample but also because the program’s curricula are still stabilizing and evolving. Future research will be conducted to assess the long-term program effects and impact on the first generation of graduates and on the feedback of current students.

Limitations

The small cohort of program registrants in the inaugural class chose to attend the interprofessional master’s degree program; hence, a selection bias may exist. We used a nonvalidated, author-created survey.

Conclusion

With the growth of master’s degree programs for health professions education, there is a real opportunity to develop IPE-ready interprofessional educators. Student and alumni surveys can serve as integral parts of an overall program evaluation plan, and can inform strategic growth and new offerings. For this novel master’s degree program, participants expressed a high level of satisfaction with the overall program. Our findings support the efficacy of the program’s domain outcomes, including development of an interprofessional identity as a health profession educator.

Disclosure

The authors report no conflicts of interest in this work.

References

1. Greiner AC, Knebel E, editors. Health Professions Education: A Bridge to Quality. Institute of Medicine Committee on the Health Professions Education Summit. Washington, DC: National Academy Press; 2003.

2. Lewis KO, Baker RC, Bratigan DH. Current practices and needs assessment of instructors in an online master’s degree in education for healthcare professionals: a first step to the development of quality standards. J Interac Online Learn. 2011;10(1):49–63.

3. Cohen R, Murnaghan L, Collins J, Pratt D. An update on master’s degree in medical education. Med Teach. 2005;27(8):686–692.

4. Schönwetter DJ, Hamilton J, Sawatzky JA. Exploring professional development needs of educators in the health sciences professions. J Dent Educ. 2015;79(2):113–123.

5. Olsson M, Persson M, Kaila P, Wikmar LN, Boström C. Students’ expectations when entering an interprofessional master’s degree program for health professionals: a qualitative study. J Allied Health. 2013;42(1):3–9.

6. Cable C, Knab M, Tham KY, Navedo DD, Armstrong E. Why are you here? Needs analysis of an interprofessional health-education graduate degree program. Adv Med Educ Pract. 2014;5:83–88.

7. Baker RC, Lewis KO. Online master’s degree in education for healthcare professionals: early outcomes of a new program. Med Teach. 2007;29(9):987–989.

8. Shavelson RJ, Webb NM. Generalizability Theory: A Primer. Newbury Park, CA: Sage Publications; 1991.

9. Guler N, Gelbal S. Studying reliability of open ended mathematics items according to the classical test theory and generalizability theory. Educ Sci Theory Prac. 2009;10(2):1011–1019.

10. Interprofessional Education Collaborative (IPEC) Expert Panel. Core Competencies for Interprofessional Collaborative Practice: Report on an Expert Panel. Washington, DC: Interprofessional Education Collaborative; 2011.

11. Tekian A, Harris I. Preparing health professions education leaders worldwide: a description of masters-level programs. Med Teach. 2012;34(1):52–58.

12. Tekian A, Artino A. AM last page: master’s degree in health professions education programs. Acad Med. 2013;88(9):1399.

13. Tekian A, Roberts T, Batty HP, Cook DA, Norcini J. Preparing leaders in health professions education. Med Teach. 2014;36:269–271.

© 2016 The Author(s). This work is published and licensed by Dove Medical Press Limited. The full terms of this license are available at https://www.dovepress.com/terms.php and incorporate the Creative Commons Attribution - Non Commercial (unported, v3.0) License.

By accessing the work you hereby accept the Terms. Non-commercial uses of the work are permitted without any further permission from Dove Medical Press Limited, provided the work is properly attributed. For permission for commercial use of this work, please see paragraphs 4.2 and 5 of our Terms.
