Clinical Interventions in Aging, Volume 9

Acceptance of an assistive robot in older adults: a mixed-method study of human–robot interaction over a 1-month period in the Living Lab setting

Authors: Wu Y, Wrobel J, Cornuet M, Kerhervé H, Damnée S, Rigaud A

Received 23 October 2013

Accepted for publication 19 December 2013

Published 8 May 2014, Volume 2014:9, Pages 801–811

DOI https://doi.org/10.2147/CIA.S56435

Checked for plagiarism Yes

Review by Single anonymous peer review

Ya-Huei Wu,1,2 Jérémy Wrobel,1,2 Mélanie Cornuet,1,2 Hélène Kerhervé,1,2 Souad Damnée,1,2 Anne-Sophie Rigaud1,2

1 Hôpital Broca, Assistance Publique – Hôpitaux de Paris; 2 Research Team 4468, Faculté de Médecine, Université Paris Descartes, Paris, France

Background: There is growing interest in investigating the acceptance of robots, which are increasingly being proposed as one form of assistive technology to support older adults, maintain their independence, and enhance their well-being. In the present study, we aimed to observe robot-acceptance in older adults, particularly after 1 month of direct experience with a robot.

Subjects and methods: Six older adults with mild cognitive impairment (MCI) and five cognitively intact healthy (CIH) older adults were recruited. Participants interacted with an assistive robot in the Living Lab once a week for 4 weeks. After being shown how to use the robot, participants performed tasks to simulate robot use in everyday life. Mixed methods, comprising a robot-acceptance questionnaire, semistructured interviews, usability-performance measures, and a focus group, were used.

Results: Both CIH and MCI subjects were able to learn how to use the robot. However, MCI subjects needed more time to perform tasks after a 1-week period of not using the robot. Both groups gave similar ratings on the robot-acceptance questionnaire. They showed low intention to use the robot, as well as negative attitudes toward and negative images of this device. They did not perceive it as useful in their daily life. However, they found it easy to use, amusing, and not threatening. In addition, social influence was perceived as a powerful factor in robot adoption. Direct experience with the robot did not change the way the participants rated robots in the acceptance questionnaire. We identified several barriers to robot-acceptance, including older adults’ uneasiness with technology, feelings of stigmatization, and ethical/societal issues associated with robot use.

Conclusion: It is important to destigmatize images of assistive robots to facilitate their acceptance. Universal design aiming to increase the market for and production of products that are usable by everyone (to the greatest extent possible) might help to destigmatize assistive devices.

Keywords: assistive robot, human–robot interaction, HRI, robot-acceptance, technology acceptance

Introduction

Robots have been proposed as one form of assistive technology with considerable potential to support older adults, maintain their independence, and enhance their well-being.1–4 Enthusiasm for developing robotic technologies to assist the elderly is linked with the belief that there is a societal need (an aging society with few human caregivers available to care for the elderly) to be met by these technological innovations, which could save costs for public services or care-assurance budgets.5 According to Broekens et al,6 robot research in eldercare includes two kinds of assistive robots, namely rehabilitation robots and social robots. Rehabilitation robots focus on physical assistance, while assistive social robots are systems that can be perceived as social entities with communication capacities. Among assistive social robots in eldercare, we can distinguish two categories: 1) pet-like companionship robots, whose main function is to enhance health and psychological well-being; and 2) service-type robots, whose main function is to support daily activities so that independent living is possible.

There is growing interest in investigating older adults’ attitudes toward robots and their acceptance of them. Understanding why older adults reject or accept assistive robots is important, both for improving robot design and for developing diffusion strategies that maximize uptake. Studies investigating robot-acceptance in older adults involve participants interacting with a robot for periods ranging from several minutes to several days. Robot-acceptance is measured with various questionnaires and/or interviews.

Kuo et al7 used blood pressure monitoring in a service scenario to investigate differences between two age-groups (40–65 years [n=29] versus >65 years [n=28]) in attitudes and reactions before and after interacting with a mobile robot capable of measuring blood pressure. They found few differences between the two age-groups. However, a significant sex effect was found: males had a more positive attitude toward robots in health care. Although participants of both sexes rated the performance of the robot highly, they expressed a desire for more interactivity and a better voice from the robot. Broadbent et al8 compared the attitudes and reactions of 57 participants aged over 40 years who had their blood pressure taken by a medical student and by a robot. There were no significant differences between the blood pressure or pulse readings taken by the robot and those taken by the medical student, suggesting that robot use in this kind of health care task is appropriate. However, participants felt more comfortable with the medical student and considered him/her to be more accurate. Initial attitudes and emotions toward robots, but neither age nor sex, were significant predictors of the quality of interaction with the robot. Stafford et al9 investigated whether older people’s attitudes toward robots and their perceptions of the robot’s mind could predict the use of a health care robot in a retirement village. Over a 2-week period, a mobile robot was placed in a retirement village, where 25 older people were invited to use its functions (vital-sign measurement, medication reminding, fall detection, entertainment, telephone calling, brain fitness, and games). Of the 25 residents, only eleven used the robot during the 2-week trial period. Age, sex, and education were not related to residents’ choice to use the robot. Compared to residents who did not use the robot, those who did had significantly more computer experience, more positive attitudes toward robots, and perceived robot minds to have less agency (capacity for self-control, morality, memory, emotion, recognition, planning, communication, and thought). Furthermore, among robot users, attitudes toward robots improved over time. Seelye et al3 studied the feasibility and acceptance of a remotely controlled robot with video-communication capability among independently living, cognitively intact older adults. The robot was placed in the homes of eight seniors for 2 complete days. During that time, participants received daily calls from the research team and up to two additional calls daily from a family member or friend trained in the use of the device. Overall, participants appreciated the potential of this technology to enhance their physical health and well-being, social connectedness, and ability to live independently at home. Participants also voiced little concern about privacy, although they wished to control who could contact them through the device. Notably, one participant who later progressed to a diagnosis of mild cognitive impairment (MCI) responded negatively to the robot. The authors identified difficulty maneuvering the robot around the environment as a possible barrier to acceptance of the device. Heerink et al10 developed and validated a new theoretical model of assistive social agent acceptance, adapted from the unified theory of acceptance and use of technology model. They conducted controlled experiments, collecting longitudinal data on three different social agents in elderly care facilities and in the homes of older adults. They found that the strength of the processes leading to acceptance differed between systems. “Perceived usefulness” and “attitude” were the most significant influences on the intention to use a robot or an on-screen agent displayed on a computer.

According to the literature, the most consistent finding is that acceptance or adoption of a robot can be predicted by positive attitudes toward it.10,11 Further, attitudes toward a robot can be improved by direct experience with it. Researchers have suggested that robot-acceptance should be measured over a longer usage period, because people become familiar with robots and can then give a more informed impression of their actual use.2,10 Therefore, in the present study, we aimed to investigate robot-acceptance in older adults and the effect of 1 month of direct experience with a robot on that acceptance. We also compared older adults with MCI to cognitively intact healthy (CIH) older adults on robot use and robot-acceptance. Older adults with MCI were expected to have more difficulty than CIH older adults in learning to use a robot. We also explored whether MCI subjects would react more negatively to a robot, as observed in the study of Seelye et al.3

The project was approved by the local ethics board, the Comité Consultatif sur le Traitement de l’Information en Matière de Recherche dans le Domaine de la Santé and the Commission Nationale Informatique et Liberté.

Subjects and methods

Participants

Eleven older adults were contacted by telephone from a list of volunteers (patients attending the memory clinic and older adults recruited from associations) who had previously agreed to participate in research studies conducted at Broca Hospital. The participants were informed about the study in writing and guaranteed anonymity and confidentiality. Return of a signed agreement was considered informed consent to participate in the study. Participants were aged between 76 and 85 years (mean 79.3 years); nine were female and two were male. The sample was highly educated: nine of the eleven participants had a college degree, one had a high school diploma, and one had attained secondary education. Six were diagnosed with MCI according to Petersen’s criteria,12 and five were CIH adults. Mann–Whitney U-tests showed that the two groups did not differ significantly in age (P=0.71) or educational level (P=0.27), but did differ significantly in computer experience (P=0.03): all CIH participants used a computer regularly, whereas two MCI participants had never used one.

Kompaï robot

Kompaï (ROBOSOFT SA, Bidart, France) is an indoor mobile platform with two drive wheels, designed as a generic platform to ease the development of advanced robotics solutions. It can recognize and synthesize speech and navigate in unknown environments. It also remembers appointments, manages shopping lists, plays music, and can be used as a videoconference system. It is equipped with an embedded controller running Windows CE and with a tablet PC running Windows Vista or 7 (Microsoft Corporation, Redmond, WA, USA) for high-level applications.

Users interact with the robot via touch screen and voice. The robot can recognize and respond to simple phrases and orders, such as “Hello”, “What date is it today?”, “Go to the living room [or another room]”, and “What are my appointments today?”

Procedure

Participants were invited to the LUSAGE Gerontechnology Living Lab (located in a building of Broca Hospital, Paris, France) to interact with a robot called Kompaï (Figure 1) once a week for 4 weeks. Each session lasted approximately 1 hour. All participants signed an informed consent form before taking part in the experiment. Two experimenters were present in each session: one guided participants in interacting with the robot, administered the questionnaires, and conducted the interviews, while the other provided technical assistance.

| Figure 1 Interaction between a participant and Kompaï. |

During the first session, we introduced Kompaï to the participants. Kompaï greeted them by saying “Hello, I am Kompaï.” We then presented the robot’s main functions, represented by nine icons on the menu page of its touch screen: messaging service, weather consulting, online grocery shopping, Internet, Skype, calendar with event reminder, medication reminder, robot navigation, and cognitive games. Finally, participants were shown how to use the robot, based on a scenario.

For the remaining sessions, participants were asked to perform a series of tasks simulating robot use in everyday life (eg, checking the calendar, adding an appointment, or playing cognitive games), following a predefined scenario in which task difficulty increased across sessions. Participants could interact with the robot via touch screen or by voice, using simple phrases. As the robot responded to only a limited number of oral commands, participants received a sheet listing the standard phrases to say.

At the end of the first and the fourth sessions, we administered the robot-acceptance questionnaire and then conducted a semistructured interview. Usability-performance measures were carried out at the end of the third and the fourth sessions. After the 1-month interaction with the robot, a focus group was organized.

Measures

Robot-acceptance questionnaire

To evaluate robot-acceptance, we developed a 25-item questionnaire (Table 1) based on an adapted model of the unified theory of acceptance and use of technology proposed by Heerink et al.10 We first translated the questionnaire developed by Heerink et al and then administered it to five older adults. Based on their feedback, we selected the most relevant items, reformulated some phrases, and added some new items. The final questionnaire captured the following factors of robot-acceptance: intention to use, perceived usefulness, perceived ease of use, perceived enjoyment, perceived sociability, attitude toward robots, anxiety, social influence, and images of an assistive robot. Participants were asked to indicate their level of agreement with 25 statements on a 5-point Likert-type scale with anchors ranging from “strongly agree” to “strongly disagree”. In this five-grade system, scores of 0 and 1 indicated poor willingness or satisfaction, a score of 2 indicated fair willingness or satisfaction, a score of 3 indicated good willingness or satisfaction, and a score of 4 indicated excellent willingness or satisfaction.
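To make the 0–4 scoring concrete, the following minimal Python sketch converts verbal responses to scores and averages them within one dimension. It rests on assumptions not stated in the study: positively worded items score 4 for “strongly agree”, negatively worded items (such as those in the anxiety and images dimensions, which are reported as reverse-scored in the Results) are flipped as 4 minus the raw score, the unnamed middle anchor is labeled “neutral”, and a dimension score is the plain mean of its items. The function names and example responses are hypothetical and are not taken from Table 1.

```python
# Minimal sketch of 0-4 Likert scoring with reverse-scored items.
# Assumptions (not from the study): the middle anchor is "neutral",
# reverse-scored items are flipped as 4 - raw, and a dimension score
# is the plain mean of its item scores.

LIKERT_0_TO_4 = {
    "strongly disagree": 0,
    "disagree": 1,
    "neutral": 2,  # assumed label for the unnamed middle anchor
    "agree": 3,
    "strongly agree": 4,
}


def score_item(response: str, reverse: bool = False) -> int:
    """Convert one verbal response to 0-4, flipping negatively worded items."""
    raw = LIKERT_0_TO_4[response.strip().lower()]
    return 4 - raw if reverse else raw


def dimension_score(responses: list[str], reverse_flags: list[bool]) -> float:
    """Mean item score for one dimension (hypothetical helper)."""
    if not responses or len(responses) != len(reverse_flags):
        raise ValueError("responses must be non-empty and match reverse_flags")
    scores = [score_item(r, rev) for r, rev in zip(responses, reverse_flags)]
    return sum(scores) / len(scores)


# Hypothetical participant agreeing with two negatively worded anxiety items:
# the reversed scores are 1 and 0, so the dimension scores 0.5 (poor).
print(dimension_score(["agree", "strongly agree"], [True, True]))  # 0.5
```

With this convention a higher score always means a more favorable view of the robot, which is what lets low and high scores be compared across dimensions.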

| Table 1 Robot-acceptance questionnaire |

Usability-performance measures

To study whether participants could learn how to use the robot, we recorded completion time, number of errors, and number of aids required to perform ten tasks (looking up the calendar, programming an appointment in the calendar, checking emails, writing an email, preparing a shopping list, checking the weather forecast, playing a cognitive game, checking the medication reminder, making a Skype video conference call, and activating a music-broadcast program). Performance at the third session after training was compared to performance at the beginning of the fourth session. This allowed us to evaluate the memorability of the system (how easily participants could reestablish proficiency when using the system again after a period of not using it).

Semistructured interview

We conducted semistructured interviews at the end of the first and last sessions (after administration of the robot-acceptance questionnaire) to explore in more depth participants’ experience of interacting with the robot and their willingness to adopt an assistive robot. The interview guide comprised the following questions:

- What do you think about this experiment?

- What do you think about the appearance of the robot?

- What do you think about interaction with the robot?

- What do you think about having this type of robot one day?

- Would you use this kind of robot one day?

Focus-group discussions

A focus group was organized, during which we presented the study results and the themes emerging from the semistructured interviews to participants for validation. By seeking participants’ views on the honesty and consistency of the research findings, this approach allowed us to judge the credibility of our findings.13 Participants could confirm the researchers’ interpretation and provide additional insight.14

Analyses

For the robot-acceptance questionnaire and performance measures, we first performed descriptive analyses. Nonparametric tests were used because a normal distribution could not be assumed with such a small sample. Comparisons between groups (MCI versus CIH subjects) were made using the Mann–Whitney U-test, and comparisons within groups (first session versus fourth session) using the Wilcoxon matched-pairs signed-rank test. All tests were two-sided, and P-values below 0.05 were considered statistically significant. All statistical analyses were performed using SPSS 17.0 software (IBM Corporation, Armonk, NY, USA).
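The between-group and within-group comparisons can be illustrated with a minimal pure-Python sketch of exact (permutation) versions of the two tests. Exact enumeration is feasible only because the groups are tiny: six MCI and five CIH participants can be split in just 462 ways. Tied values receive midranks, and zero differences are dropped before the signed-rank test. The function names are hypothetical, and this is not the SPSS 17.0 procedure used in the study; SPSS may report asymptotic rather than exact P-values, so results can differ.

```python
# Minimal exact (permutation) versions of the Mann-Whitney U test and the
# Wilcoxon matched-pairs signed-rank test for very small samples.
# Illustrative sketch only; not the SPSS procedure used in the study.
from itertools import combinations, product


def midranks(values):
    """Rank values from 1, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    return ranks


def mann_whitney_exact(x, y):
    """Between-group test: returns (U for sample x, two-sided exact p)."""
    n1, n2 = len(x), len(y)
    ranks = midranks(list(x) + list(y))
    offset = n1 * (n1 + 1) / 2
    u_obs = sum(ranks[:n1]) - offset
    centre = n1 * n2 / 2  # expected U under the null hypothesis
    extreme = total = 0
    # Enumerate every way of assigning n1 of the pooled ranks to sample x.
    for idx in combinations(range(n1 + n2), n1):
        u = sum(ranks[i] for i in idx) - offset
        total += 1
        if abs(u - centre) >= abs(u_obs - centre) - 1e-9:
            extreme += 1
    return u_obs, extreme / total


def wilcoxon_exact(before, after):
    """Within-group test: returns (W+ of after - before, two-sided exact p).

    Zero differences are dropped before ranking."""
    diffs = [post - pre for pre, post in zip(before, after) if post != pre]
    if not diffs:
        return 0.0, 1.0
    ranks = midranks([abs(d) for d in diffs])
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    centre = sum(ranks) / 2  # expected W+ under the null hypothesis
    extreme = total = 0
    # Enumerate all 2**n sign patterns of the ranked differences.
    for signs in product((False, True), repeat=len(ranks)):
        w = sum(r for r, positive in zip(ranks, signs) if positive)
        total += 1
        if abs(w - centre) >= abs(w_plus - centre) - 1e-9:
            extreme += 1
    return w_plus, extreme / total
```

For example, `mann_whitney_exact([1, 2, 3], [4, 5, 6])` returns U = 0 with p = 0.1, and `wilcoxon_exact([1, 2, 3, 4, 5], [2, 4, 6, 8, 10])` returns W+ = 15 with p = 0.0625. These are the smallest two-sided exact P-values attainable with three versus three observations and with five pairs, which shows how limited the power of exact tests is in samples this small.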

Semistructured interviews were audiotaped and transcribed. The transcripts were then analyzed using inductive thematic analysis.15 After familiarizing themselves with the data and generating initial codes, the researchers identified a number of common themes and issues emerging from the ideas expressed by participants during the interviews.

Results

Robot-acceptance questionnaire

At the first session, most dimensions of robot-acceptance received low to moderate scores from participants (Table 2). Participants gave low scores to the following dimensions: intention to use, perceived usefulness, attitudes toward robots, and images of an assistive robot (reverse-scored). However, the dimensions ease of use, social influence, perceived enjoyment, and anxiety (reverse-scored) received relatively high scores. There were no significant differences between the MCI and CIH groups in scores on any dimension of robot-acceptance.

Scores at the fourth session did not differ significantly from those at the first session in either group. However, in the CIH group, there was a tendency (P=0.07) toward a decrease in scores on the “attitudes toward robots” dimension.

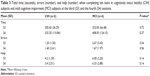

Performance measures

There were no significant differences between groups in task-completion time, errors, or help at the third and fourth interaction sessions (Table 3). Within-group analyses indicated that from the third to the fourth session, only completion time increased significantly, and only in the MCI group (P=0.028). Errors (P=0.27) and help (P=0.67) in the MCI group, and completion time (P=0.50), errors (P=0.79), and help (P=0.59) in the CIH group, did not change significantly.

Semistructured interviews

We identified three major themes capturing participants’ attitudes toward, and willingness to adopt, an assistive robot: interaction experience with the robot, intention to use an assistive robot, and barriers to acceptance of an assistive robot. A summary of the three major themes and their subthemes is presented in Table 4.

| Table 4 Summary of major themes and subthemes of interview results |

Theme 1: Interaction experience with the robot

All of the participants found this experience interesting, nice, and even fascinating. The direct experience with the robot allowed participants to discover an assistive robot, its functioning and functions, and its usefulness. This allowed them to gain some knowledge about technological progress.

It interested me. It also interested my friends. They asked me how I’d come to do this … It interested me to get to know a material that I didn’t know before … to see the possibilities offered by this material. (S11, MCI, aged 76 years)

Most of the participants (n=7) liked the appearance of the robot, and considered it pleasant, nice, and pretty.

I find it nice and amusing. It’s funny. It adds a little spice to life. (S3, CIH, aged 78 years)

While two participants found this stylish humanoid robot too machine-like and lacking some human-like features, another participant found it ridiculous that a robot should look like a human being.

Imitation of the human head is ridiculous. It must be more abstract. A human head … it’s not a human … why does it copy a human head? It seems to me that this imitation is not necessary. (S9, MCI, aged 80 years)

Some participants (n=5) found it amusing and fun to interact with the robot by voice and by touch screen. However, the voice control attracted many criticisms, as it did not work well because of technical problems. The frequent failures to interact with the robot by voice frustrated participants, even though they understood that the robot was only an imperfect prototype. Three participants found talking to the robot difficult and not spontaneous, and preferred interacting with it via touch screen.

It doesn’t seem to me indispensable that the robot talks … I found it very difficult to talk to a robot. This is because I am used to typing on a computer keyboard. It’s not spontaneous for me to … I don’t have the impression that this is a spontaneous way to … but it’s also a question of habit of doing things. (S11, MCI, aged 76 years)

The fact that the robot could talk and react to voice gave participants the impression of having an exchange with it, even if this exchange was very limited. Two participants found the robot “cold” and less amusing in the long run because of a lack of “spontaneity”.

There was a kind of exchange. This was not an affectionate exchange, such as an exchange with a human being, but this allowed communicating with the outside world. It works as a mediator. We don’t ask much from it. Fundamentally, it’s just a robot. (S8, MCI, aged 76 years)

For me, personally, I find it cold. It’s nice but cold. I had to send it information so that it could react. It’s an ice cube … I had to give it current so that it could react. (S2, MCI, aged 77 years)

Most of the participants (n=7) highlighted the importance of familiarization and support in learning to use the robot. At the end of the fourth session, most of the participants (n=8) found the robot easy to operate. However, three participants in the MCI group still found it difficult to use the robot. They reported a lack of motivation or cognitive difficulties that hindered them from learning how to use it.

I don’t like this … It isn’t suitable for me … It isn’t suitable for people who lose rapidity. I need more time to look around … it’s the same thing as my computer. I have to pay much attention. (S1, MCI, aged 79 years)

The robot was considered a useful aid for people with a handicap or those who live alone. It can provide a sort of company and presence, is available around the clock, and provides a certain degree of security. An assistive robot could be offered to those who consider the presence of a human helper at home an intrusion. However, some participants (n=5) questioned the added value of the robot compared with other technological devices offering similar functions.

When we become old and need assistance, it’s not pleasant to have someone at home … With such a robot, I would feel more secure and could communicate wherever I am … I don’t like to have someone who tells me to do this and that. (S10, CIH, aged 79 years)

If we have a computer, we don’t need it [robot]. If we have a computer, we have the same things and we know how to use it. (S4, CIH, aged 76 years)

Theme 2: Intention to use an assistive robot

All participants reported that they did not intend to use an assistive robot at present because they were still independent.

An assistive robot … not for the moment, but if I were handicapped, maybe it would interest me. (S8, MCI, aged 76 years)

As to robot use in the future, most of the participants (n=7) were not enthusiastic. We distinguished five types of responses to the question, “Do you think that you will use this kind of robot one day?”

Only four participants expressed a deferred acceptance of this kind of robot, in case of a future loss of autonomy.

If I were no longer well and able, if I had difficulties to move or in the case that I were sick or couldn’t go out, it could help me. But presently, I do everything normally. (S3, CIH, aged 78 years)

Two participants considered the robot a last resort.

If there were no other choices … yes … I would accept it, but not with pleasure … It’s nice and cute, but it doesn’t attract me. I like the warmth of human beings. (S2, MCI, aged 77 years)

One participant considered that she was too old to learn how to use the robot.

Given my old age, I know nothing about how to manipulate the robot … I don’t think that I have the time to. (S6, MCI, aged 85 years)

Another said that she could not answer this question yet and that she would need to think it over.

For the moment, it’s difficult to … maybe in 1 or 2 years … I don’t know. This is an issue on which I have to reflect. (S5, CIH, aged 83 years)

Finally, three participants definitively rejected this kind of assistance.

I’ve told you more than once about this … I don’t plan to use it, even if in 10–15 years I am in a wheelchair. No … I regret this … even though I’ve told myself not to have a mental block over it … but when I was home, I told myself “What a strange idea to do something like this.” (S9, MCI, aged 80 years)

Theme 3: Barriers to acceptance of an assistive robot

Overall, participants did not consider themselves potential users of an assistive robot at present. We identified three barriers to acceptance of this kind of robot. First, for all the participants, the use of an assistive robot was associated with negative aspects or representations of aging (loneliness, dependence), which corresponded neither to their actual condition nor to their own identity. For them, this kind of robot was reserved for those who are dependent, handicapped, no longer well and able, or living in complete solitude. All of them reported that they did not yet need an assistive robot.

Not for now, but in the future, if I was handicapped, if I couldn’t use my computer anymore. It’s easier to use than a computer. We don’t have to move. (S3, CIH, aged 78 years)

For two participants, an assistive robot was a distressing reminder that they might one day become dependent, a condition perceived as threatening and difficult to cope with. These participants tried to put off entering this stage as long as possible and envisaged it with fear.

Would you use this kind of robot in the future? (Investigator)

I refuse to think about it. It’s dependence. I don’t want to become dependent. (S2, MCI, aged 77 years)

What makes you reluctant toward this kind of robot? (Investigator)

To think of becoming dependent. The heart of the issue is to use the robot when we begin to lose our independence. For us, it’s a hurdle to pass, and this isn’t obvious … We didn’t have a robot as a toy or as other things. For me, a robot is associated with an onset of dependence. It’s a passage … We can’t imagine how we become dependent. In our association, we do everything to distance ourselves from the image of dependence. We know that we are likely to encounter it, but we do everything to push it back as long as possible. (S5, CIH, aged 83 years)

Second, many participants (n=8) mentioned that they belong to a generation that is not familiar with technologies and does not easily get used to them. Some even reported an aversion toward machines and computers. Robot use was not compatible with their “generational habitus”. They thought that new cohorts of older people would be less reluctant toward robots, because they are more familiar with information and communication technology (ICT) and will adopt new ICT products more easily.

Those who are younger than me and who have machines with them won’t react in the same way as me … People in my generation don’t get used to things like this. I emphasize that it’s about my generation … Those who have home automation systems won’t show such resistance. (S5, CIH, aged 83 years)

Finally, three participants raised ethical and societal issues related to robot use. They reported that they would feel followed and watched, and that there would be a risk of invasion of privacy. They were concerned about a future in which humans communicate and share their lives with robots.

There is a function of Skype videoconference call on the robot. I’ve proposed Skype to my daughter, but she refused it because she didn’t want other people to see family pictures on the Internet … What will happen to robot users who are likely to be fragile? … they will be identified on the Internet … it’s necessary to enforce security on this. (S4, CIH, aged 76 years)

For me, this will be a catastrophic society … to see people talk to their robots … (S7, CIH, aged 83 years)

Focus group

Seven participants (three MCI and four CIH subjects; two men and five women) took part in the focus group after the four interaction sessions. They validated the three major themes from the interviews and their subthemes. Some additional issues were raised during the discussions.

- Funding of the cost of an assistive robot: Will pension funds partially finance its purchase? Is it more reasonable to rent than to buy this kind of robot?

- Maintenance of robots and technical support: What happens if a robot breaks down? Is there always someone available to assist a robot user to troubleshoot?

Participants also raised some ethical issues concerning robot use in eldercare.

- Does robot use actually promote a person’s autonomy? According to “use it or lose it” logic,16 if a robot does things for its user, does the user run the risk of losing some capacities because he/she no longer makes the effort to use them?

- Could robot use lead to a decrease in social contact among older people? The presence of a robot could reassure children about the safety of their elderly parents. However, it could also become an excuse for children to visit less often. Furthermore, participants criticized the economic logic of promoting robots to assist older people in order to cut the cost of human interventions. They worried about the dehumanization of society if robots take over tasks that only humans are supposed to perform. Some participants argued that society should be encouraged to develop programs that build the skills of human caregivers.

Discussion

The aim of the study was to investigate acceptance of an assistive robot in older adults and the effect of direct experience with a robot over a 1-month period on its acceptance. A mixed-method approach combining a questionnaire, a performance-based measure, semistructured interviews, and a focus group was used.

Concerning the usability of the robot, there were no significant differences between CIH participants and MCI subjects on performance measures. However, MCI subjects needed more time to perform tasks after a 1-week period of not using the robot. These results suggest that both groups could learn and remember how to use the robot, but MCI participants might encounter more difficulties. This finding corresponds with interview data, in which three MCI participants reported difficulties in using the robot after four interaction sessions.

As for robot-acceptance, at the first session both groups gave similar ratings on the dimensions of robot-acceptance. Participants showed low intention to use an assistive robot and negative attitudes toward it, as well as negative images of it. They did not perceive it as useful. However, they found the robot easy to use, amusing, and not threatening. Further, social influence was perceived as quite powerful on robot adoption. This suggests that robot uptake in older adults could be facilitated by their children or by health professionals who encourage them to use this kind of device. Direct experience with the robot did not change the way participants rated the dimensions of robot-acceptance. Nonetheless, participants in the CIH group tended to show less positive attitudes toward an assistive robot over time. This finding is somewhat at odds with studies showing that older adults’ attitudes toward a technology (computer, robot) can improve over time through direct experience with it.9,11

Analyses of the qualitative data corroborated the questionnaire results and allowed an in-depth understanding of older adults’ attitudes toward, and willingness to adopt, an assistive robot. These did not differ between participants with MCI and CIH participants, and were not influenced by direct experience with the robot. Participants as a whole rated the experience positively, because it allowed them to discover the robot and how technological progress could be applied to assist humans. Their high level of satisfaction with the experiment contrasts sharply with their low intention to use a robot. Indeed, none of the participants expressed an intention to use an assistive robot at present, because they did not consider themselves to be in need of one. Only a few expressed an outright intention to use a robot in the future. The majority showed hesitation, or even rejection. Several barriers to robot-acceptance were identified.

The participants belong to a generation that is not familiar with, and does not easily adapt to, new technologies. Indeed, research has shown that cohort is a factor that can influence the decision to use technologies.17 For them, robotics, like computers, represents a new technology to which they were exposed very late in life. For some older adults, it is important to learn how to use new technologies in order not to feel alienated from modern society. Others showed a lack of interest or motivation,18–20 or even reluctance, toward technology, owing to a fear of the dehumanization of society.

In addition to barriers related to the adoption of new technologies by older people, the “stigma” embodied by an assistive robot or other assistive devices constitutes an important barrier to their acceptance.5,21–23 Assistive technologies designed to facilitate autonomy are often seen as a sign of decline or handicap. For participants, the only circumstance in which they would use an assistive robot was becoming dependent, a condition they considered unacceptable. An assistive robot conveys images of dependence and solitude, from which the elderly tend to distance themselves.24 Therefore, the stigma associated with an assistive robot could lead older adults to postpone using one.

Some researchers have suggested that the persistent uneasiness of older people concerning e-technologies might stem from a disturbing awareness that ICT may be changing fundamental human nature or threatening what it means to care.25,26 This may help explain the concerns participants raised about robot use in eldercare (fear of reduced human contact, of deskilling, and of a new dependence on machines). These concerns could also restrain people from adopting an assistive robot.

At this stage, it seems that the cohort of older adults on the threshold of the “fourth age”27 is not yet ready to adopt an assistive robot, a product that is technologically new but at the same time laden with stigmatizing symbolism. This finding parallels that of Heart and Kalderon,20 who showed that older adults are not yet ready to adopt health-related ICT, mainly due to a lack of perceived need and a lack of interest. A new cohort of younger older adults (baby boomers) would probably show a higher level of acceptance of this kind of robotic device. Nonetheless, it remains important to destigmatize images of assistive robots to facilitate their acceptance.

Universal design, which aims to increase the market for and production of products usable by everyone (to the greatest extent possible),28 might help to destigmatize assistive devices. Blackman5 suggests that a care robot should target a wider market by integrating functionalities beyond personal care, such as remote surveillance of an unoccupied home. Add-on modules could then be integrated on demand, according to the user’s needs. He proposes an “incremental technology development” approach to developing and launching new technological products on the market. For example, an affordable assistive robot could combine the mobile platform of a robot vacuum cleaner with a conventional telecare alarm, a touch screen, and social networking sites. This type of product could achieve better market penetration. Because universal design provides a single solution accommodating all people, older users would perceive themselves less as persons with special needs.

Limitations of the study

This study has some limitations that might constrain the generalizability of its findings. First, it had a small sample drawn from a specific population, composed mostly of highly educated French women. Second, although the experiment lasted 4 weeks, subjects used the robot for only 1 hour each week, in a lab environment. Future studies could address these issues by being conducted in participants’ homes, with larger and sex-balanced samples.

Conclusion

Despite these limitations, our original mixed-methods approach to investigating human–robot interaction over a 1-month period yielded interesting data, providing in-depth knowledge about older adults’ willingness to adopt an assistive robot and about the barriers to robot-acceptance. Our findings led to some suggestions for robot designers on how to make assistive robots more attractive and acceptable to older people.

Acknowledgments

This work was supported by the French National Research Agency (ANR), the National Solidarity Fund for Autonomy (CNSA), and the General Directorate for Armament (DGA) through the TECSAN program (ANR-09-TECS-012). We also thank Fanny Lorentz and Véronique Ferracci for their help with project management.

Disclosure

The authors report no conflicts of interest in this work.

References

1. Smarr CA, Prakash A, Beer JM, Mitzner TL, Kemp CC, Rogers WA. Older adults’ preferences for and acceptance of robot assistance for everyday living tasks. Poster presented at: 56th Annual Meeting of Human Factors and Ergonomics Society; October 22–26, 2012; Boston, MA.

2. Broadbent E, Stafford R, MacDonald B. Acceptance of healthcare robots for the older population: review and future directions. Int J Soc Robot. 2009;1(4):319–330.

3. Seelye AM, Wild KV, Larimer N, Maxwell S, Kearns P, Kaye JA. Reactions to a remote-controlled video-communication robot in seniors’ homes: a pilot study of feasibility and acceptance. Telemed J E Health. 2012;18(10):755–759.

4. Ezer N, Fisk AD, Rogers WA. More than a servant: self-reported willingness of younger and older adults to having a robot perform interactive and critical tasks in the home. Poster presented at: 53rd Annual Meeting of Human Factors and Ergonomics Society; October 19–23, 2009; San Antonio, TX.

5. Blackman T. Care robots for the supermarket shelf: a product gap in assistive technologies. Ageing Soc. 2013;1(1):1–19.

6. Broekens J, Heerink M, Rosendal H. Assistive social robots in elderly care: a review. Gerontechnology. 2009;8(2):94–103.

7. Kuo I, Rabindran J, Broadbent E, et al. Age and gender factors in user acceptance of healthcare robots. Poster presented at: 18th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN); September 27–October 2, 2009; Toyama, Japan.

8. Broadbent E, Kuo IH, Lee YI, et al. Attitudes and reactions to a healthcare robot. Telemed J E Health. 2010;16(5):608–613.

9. Stafford RQ, MacDonald BA, Jayawardena C, Wegner DM, Broadbent E. Does the robot have a mind? Mind perception and attitudes towards robots predict use of an eldercare robot. Int J Soc Robot. 2014;6(1):17–32.

10. Heerink M, Kröse B, Evers V, Wielinga B. Assessing acceptance of assistive social agent technology by older adults: the Almere model. Int J Soc Robot. 2010;2(4):361–375.

11. Jay GM, Willis SL. Influence of direct computer experience on older adults’ attitudes toward computers. J Gerontol. 1992;47(4):P250–P257.

12. Petersen RC, Doody R, Kurz A, et al. Current concepts in mild cognitive impairment. Arch Neurol. 2001;58(12):1985–1992.

13. Guba EG, Lincoln Y. Fourth Generation Evaluation. Newbury Park (CA): Sage; 1989.

14. Crabtree BF, Miller WL. Research practice settings: a case study approach. In: Crabtree BF, Miller WL, editors. Doing Qualitative Research. Thousand Oaks (CA): Sage; 1999:71–88.

15. Braun V, Clarke V. Using thematic analysis in psychology. Qual Res Psychol. 2006;3(2):77–101.

16. Katzman R. Can late life social or leisure activities delay the onset of dementia? J Am Geriatr Soc. 1995;43(5):583–584.

17. Mahmood A, Yamamoto T, Lee M, Steggell C. Perceptions and use of gerotechnology: implications for aging in place. J Hous Elderly. 2008;22(1–2):104–126.

18. Morris A, Goodman J, Brading H. Internet use and non-use: views of older users. Univers Access Inf Soc. 2007;6(1):43–57.

19. Selwyn N, Gorard S, Furlong J, Madden L. Older adults’ use of information and communications technology in everyday life. Ageing Soc. 2003;23(5):561–582.

20. Heart T, Kalderon E. Older adults: Are they ready to adopt health-related ICT? Int J Med Inform. 2013;82(11):e209–e231.

21. Gucher C. Technologies du “Bien vieillir et du lien social”: questions d’acceptabilité, enjeux de sens et de continuité de l’existence-la canne et le brise-vitre [“Good ageing and social links” technology: questions of acceptability, direction and continued existence – walking stick and emergency glass-breaking hammer]. Gerontol Soc. 2012;2(141):27–39. French.

22. Porter EJ, Benson JJ, Matsuda S. Older homebound women: negotiating reliance on a cane or walker. Qual Health Res. 2011;21(4):534–548.

23. Parette P, Scherer M. Assistive technology use and stigma. Educ Train Dev Disabil. 2004;39(3):217–226.

24. Neven L. ‘But obviously not for me’: robots, laboratories and the defiant identity of elder test users. Sociol Health Illn. 2010;32(2):335–347.

25. McLean A. Ethical frontiers of ICT and older users: cultural, pragmatic and ethical issues. Ethics Inf Technol. 2011;13(4):313–326.

26. Ganyo M, Dunn M, Hope T. Ethical issues in the use of fall detectors. Ageing Soc. 2011;31(8):1350–1367.

27. Blanchard-Fields F, Kalinauskas AS. Challenges for the current status of adult development theories: a new century of progress. In: Smith MC, Defrates-Densch N, editors. Handbook of Research on Adult Learning and Development. New York: Routledge; 2009:3–33.

28. Mace R. Universal design: Barrier free environments for everyone. Designers West. 1985;33(1):147–152.

© 2014 The Author(s). This work is published and licensed by Dove Medical Press Limited. The full terms of this license are available at https://www.dovepress.com/terms and incorporate the Creative Commons Attribution - Non Commercial (unported, 3.0) License. By accessing the work you hereby accept the Terms. Non-commercial uses of the work are permitted without any further permission from Dove Medical Press Limited, provided the work is properly attributed. For permission for commercial use of this work, please see paragraphs 4.2 and 5 of our Terms.
