Clinical Audit, Volume 6

Evaluating the quality of in-hospital stroke care, using an opportunity-based composite measure: a multilevel approach

Authors Ribera A, Abilleira S, Permanyer-Miralda G, Tresserras R, Pons J, Gallofré M

Received 23 January 2014

Accepted for publication 7 March 2014

Published 26 August 2014 Volume 2014:6 Pages 11–20

DOI https://doi.org/10.2147/CA.S61243

Checked for plagiarism Yes

Review by Single anonymous peer review

Aida Ribera,1–3 Sònia Abilleira,1,3 Gaietà Permanyer-Miralda,2,3 Ricard Tresserras,4 Joan MV Pons,1,3 Miquel Gallofré3,4

1Stroke Program, Agency for Health Quality and Assessment of Catalonia, Barcelona, Spain; 2Cardiovascular Epidemiology Unit, Hospital Vall d'Hebron, Barcelona, Spain; 3CIBER Epidemiología y Salud Pública, Madrid, Spain; 4Stroke Program, Department of Health, Autonomous Government of Catalonia, Barcelona, Spain

Background: Development of process-based quality measures has been of increasing interest. We aimed to construct a composite quality measure for acute stroke care and to evaluate its performance at the hospital level.

Methods: We used data from the Stroke Audit 2007, based on retrospective review of medical charts linked to the Mortality Register 2007–2008 (Catalonia, Spain). Eight quality measures were selected on the basis of clinical relevance, scientific evidence, and relationship to mortality: screening of dysphagia, initiation of antiplatelets at less than 48 hours (if ischemic stroke), early mobilization, assessment of rehabilitation, management of hypertension, management of dyslipidemia, anticoagulation in case of atrial fibrillation (if ischemic stroke), and antithrombotics on discharge (ischemic strokes only). We constructed an opportunity-based composite quality measure from the eight individual measures and correlated noncompliance with the individual and composite quality measures with risk-standardized 30-day mortality at the hospital level. Noncompliance with the opportunity-based composite measure was calculated as the total number of instances in which a required individual measure was not performed or not documented, divided by the total number of eligible opportunities. Multilevel logistic regression analyses were conducted to assess the variability of noncompliance at the hospital level and to what extent variability could be explained by differences in hospital characteristics.

Results: We analyzed data from 1,686 patients (representing 9,334 opportunities for compliance with the composite quality measure) admitted to 47 acute hospitals. Noncompliance with the composite was 32.7% (95% confidence interval [CI], 31.5%–33.9%), and the correlation with hospital risk-adjusted 30-day mortality was 0.24 (P=0.1). Using multilevel logistic modeling, hospitals with an intermediate number of annual stroke admissions (150–350 versus <150) and hospitals with an ongoing stroke registry showed better compliance (odds ratio for noncompliance, 0.59 [95% CI, 0.4–0.87] and 0.5 [95% CI, 0.35–0.73], respectively). Individual factors explained 3.9% of hospital variability, whereas structural variables explained 49.4% of hospital variability.

Conclusion: An opportunity-based composite may be useful to globally assess quality of stroke care across providers, even though correlations with mortality are weak. In addition, it offers new insights about the relationship between hospitals' structural resources and quality.

Keywords: acute stroke care, composite quality measure, multilevel analysis

Introduction

Development and analysis of process-based quality measures (QMs) has been of increasing interest. Although information to prioritize specific quality improvement efforts must necessarily be based on individual QMs (iQMs), development of a composite QM has some practical advantages and is conceptually sound. The use of a composite should reduce information burden and make provider assessment more comprehensive than iQMs for assigning providers a place on a scale of better-to-worse performance.1 A composite might also capture more information about quality of care, leaving a smaller residual of unmeasured care.2

In 2007, we launched the Second Stroke Audit in Catalonia (Spain), which assessed quality of in-hospital stroke care in 47 acute hospitals on the basis of compliance with a series of guideline-based iQMs. Clinical data from the audit were linked to data from the Mortality Register of Catalonia (2007 and 2008). Audits are part of a quality improvement initiative launched in 2004 by the Stroke Program, a section of the Master Plan for Diseases of the Circulatory System of the Catalan Department of Health. This quality improvement strategy is based on an audit and feedback scheme that was applied after the development and publication of Clinical Practice Guidelines on stroke in 2005.

We have previously reported the results of the Second Stroke Audit,3 in which we found an association between a few iQMs and 30-day and/or 12-month mortality. The aim of the present study was to evaluate performance of a composite QM for in-hospital stroke care by determining its effect on risk-adjusted mortality at 30 days at the hospital level and assessing the variability of noncompliance with the composite QM across hospitals, using a multilevel statistical modeling approach.

Methods

The present analysis is based on patient- and hospital-level data from the Stroke Audit 2007.4

Individual QMs of stroke and development of a composite QM

The process for selecting the most relevant process-of-care QMs is detailed elsewhere.4 Briefly, members of the guidelines board and the Standing Commission of the Stroke Program listed 43 clinically and/or scientifically relevant recommendations that represented quality standards of stroke care. For the development of a composite, we selected a core of eight iQMs out of the initial 43 individual QMs that showed an association with 30-day mortality at the patient level:3 screening of dysphagia performed within 24–48 hours poststroke, initiation of antiplatelets after less than 48 hours (if ischemic stroke), early mobilization within 48 hours after stroke onset, assessment of rehabilitation needs within the first 2 days poststroke, management of hypertension (patients who receive specific antihypertensive medication by discharge, irrespective of their premorbid blood pressure), management of dyslipidemia (patients with ischemic strokes who receive proper assessment of their lipid profile during admission and prescription of statins when necessary on discharge), anticoagulation in case of atrial fibrillation (if ischemic stroke), and antithrombotics on discharge (ischemic strokes only). For all measures, we used data from stroke patients who were alive more than 72 hours poststroke. This minimized the effect on clinical outcomes of patients with a very poor prognosis at onset, who might die before the indicated process of care could be performed or who were potential candidates for palliative care only, regardless of their eligibility for the evaluated iQMs.

To combine iQMs into a composite score, we chose the opportunity scoring method because it increases power and avoids the need for assigning weights to the iQMs.1 Noncompliance with the opportunity-based composite was calculated as the total number of instances in which a required iQM was not performed or not documented (ie, incorrect care given) divided by the total number of eligible opportunities (the number of required iQMs). Note that, as applied here, the opportunity-based composite measures “care gaps” rather than adherence, which is the reverse of how such measures are usually reported in quality assessments. The opportunity-based composite can be calculated at the hospital level by summing up all eligible opportunities across all patients of a given hospital.5
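
As an illustration of the opportunity scoring described above, the following minimal sketch computes hospital-level noncompliance as missed opportunities divided by eligible opportunities. The record layout, the hospital and patient identifiers, and the function name `composite_noncompliance` are assumptions made for this example and are not taken from the audit data set.

```python
from collections import defaultdict

# Hypothetical records: (hospital, patient, iQM, eligible, performed).
# All identifiers and values are invented for illustration.
records = [
    ("H1", "p1", "dysphagia_screening", True, True),
    ("H1", "p1", "early_mobilization", True, False),
    ("H1", "p1", "anticoagulation_af", False, False),  # not eligible: no opportunity
    ("H1", "p2", "dysphagia_screening", True, False),
    ("H2", "p3", "dysphagia_screening", True, True),
    ("H2", "p3", "early_mobilization", True, True),
]


def composite_noncompliance(records):
    """Return {hospital: missed opportunities / eligible opportunities}."""
    missed = defaultdict(int)
    eligible = defaultdict(int)
    for hospital, _patient, _iqm, is_eligible, performed in records:
        if not is_eligible:
            continue  # an iQM the patient is not eligible for is not counted
        eligible[hospital] += 1
        if not performed:
            missed[hospital] += 1  # not performed or not documented
    return {h: missed[h] / eligible[h] for h in eligible}


rates = composite_noncompliance(records)
for hospital, rate in sorted(rates.items()):
    print(f"{hospital}: noncompliance = {rate:.1%}")
```

Because the denominator is the pooled number of opportunities, patients who are eligible for more iQMs contribute more to a hospital's score, and no weights have to be assigned to the individual measures.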

Because of the retrospective nature of the design, obtaining an informed consent was not necessary according to local law. The Stroke Audit protocol was approved by the Research Commission of the Catalan Agency for Health Technology Assessment and Research after the assessment of confidentiality and ethical aspects.

Statistical analyses

Variables are presented as percentages or mean (standard deviation), as appropriate. Noncompliance with the composite according to hospitals’ characteristics was assessed using Student’s t-test or one-way analysis of variance, as appropriate. At the hospital level, a normal distribution of noncompliance with the composite was assumed after inspection of standardized normal probability plots.

Correlations between iQMs, the composite, and risk-adjusted mortality at 30 days were assessed with Pearson correlation coefficients.

Estimation of risk-adjusted 30-day mortality rates

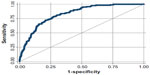

All-cause mortality at 30 days after stroke onset was obtained from the Mortality Register of Catalonia (January 1, 2007–December 31, 2008). Risk-adjusted 30-day mortality rates were calculated for each hospital as observed mortality in hospital Y/expected mortality in hospital Y, where expected mortality was estimated by fitting logistic regression models using generalized estimating equations to adjust for interhospital variability. The models included age, sex, cardiovascular risk factors, history of myocardial infarction or angina, atrial fibrillation, heart failure, history of previous stroke or transient ischemic attack, previous independence for activities of daily living, stroke severity (based on presence of speech disturbance, motor impairment, and ability to walk), and stroke subtype (ischemic or hemorrhagic). The model for prediction of expected 30-day mortality rate showed good prediction and calibration properties (C-statistic = 0.83; Hosmer-Lemeshow test P-value = 0.679). Predictors of mortality at 30 days poststroke and area under the receiver operating characteristic curve are available in additional files (Table S1 and Figure S1).
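
The observed/expected ratio can be sketched as follows, assuming that each patient already has a predicted probability of 30-day death from a previously fitted risk model. The patient tuples, the probabilities, and the function name `observed_to_expected` are hypothetical; the sketch does not fit the logistic model or reproduce the generalized estimating equations step.

```python
from collections import defaultdict

# Hypothetical patients: (hospital, died within 30 days, predicted probability).
# The probabilities stand in for the output of an already fitted risk model.
patients = [
    ("H1", 1, 0.30),
    ("H1", 0, 0.10),
    ("H1", 0, 0.20),
    ("H2", 0, 0.05),
    ("H2", 1, 0.45),
]


def observed_to_expected(patients):
    """Return {hospital: observed deaths / expected deaths}."""
    observed = defaultdict(float)
    expected = defaultdict(float)
    for hospital, died, p_hat in patients:
        observed[hospital] += died   # count of deaths actually seen
        expected[hospital] += p_hat  # sum of model-predicted risks
    return {h: observed[h] / expected[h] for h in observed}


ratios = observed_to_expected(patients)
for hospital, ratio in sorted(ratios.items()):
    print(f"{hospital}: O/E = {ratio:.2f}")
```

A ratio above 1 means that more deaths were observed than the case mix of that hospital would predict, and a ratio below 1 means fewer.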

Analysis of the association of patient and hospital characteristics with noncompliance with the opportunity-based composite, using multilevel logistic analysis

Using multilevel logistic analysis with opportunity-based data, we investigated the extent to which interhospital variability of noncompliance with the composite could be explained by patients or hospital characteristics. Each opportunity (each iQM for which the patient was eligible) contributed an observation, and the outcome was a dichotomous variable indicating whether or not the opportunity was fulfilled. For example, if a patient was eligible for eight iQMs and received five, the patient would contribute with eight observations in the data set, of which three would equal one (indicating noncompliance) and five would equal zero (indicating compliance). Thus, there are two levels of clustering in the data: the patient level and the hospital level.
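
The data layout in this example can be sketched as a small expansion step that turns one patient into one row per eligible iQM. The short iQM labels, the dictionary keys, and the function name `expand_patient` are assumptions chosen for illustration.

```python
# Short labels for the eight iQMs; the labels themselves are hypothetical.
IQMS = [
    "dysphagia_screening",
    "early_antiplatelets",
    "early_mobilization",
    "rehabilitation_assessment",
    "hypertension_management",
    "dyslipidemia_management",
    "anticoagulation_af",
    "antithrombotics_discharge",
]


def expand_patient(hospital_id, patient_id, eligible, received):
    """Return one row per eligible iQM with a 0/1 noncompliance outcome."""
    rows = []
    for iqm in IQMS:
        if iqm not in eligible:
            continue  # no opportunity, so no observation
        rows.append({
            "hospital": hospital_id,  # hospital-level cluster
            "patient": patient_id,    # patient-level cluster
            "iqm": iqm,               # adjusts for the iQM opportunity mix
            "noncompliance": 0 if iqm in received else 1,
        })
    return rows


# Patient eligible for all eight iQMs who received the first five.
rows = expand_patient("H1", "p1", eligible=set(IQMS), received=set(IQMS[:5]))
print(len(rows), sum(r["noncompliance"] for r in rows))
```

Each row keeps both the patient and the hospital identifier, which is what allows a multilevel model to account for the two levels of clustering.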

We performed the analysis in three steps. First, we built an “empty” model that only included a random intercept and an iQM indicator variable (which equals 1 in the case of noncompliance with the indicated iQM to adjust for the iQM opportunity mix) to measure the interhospital variability of noncompliance with the composite. Second, we included patients’ characteristics to investigate the extent to which differences regarding noncompliance with the composite at the hospital level were explained by characteristics of patients attended at each hospital. Finally, we added the hospital variables to investigate whether hospital characteristics explained the interhospital variability regarding noncompliance with the composite. We estimated measures of association with noncompliance with the composite for both patient and hospital characteristics.

Selection of variables was based on the strength of their bivariate associations with noncompliance with the composite. Candidate individual variables were age, sex, diabetes, dyslipidemia, hypertension, previous acute myocardial infarction/angina, previous stroke/transient ischemic attack, atrial fibrillation, prestroke independence for activities of daily living, speech disturbance, motor impairment, ability to walk, and ischemic stroke. Candidate hospital variables were number of beds, annual number of stroke admissions, academic/teaching hospital, stroke unit, 24 hours/365 days on-site/on-call neurologist, on-site intravenous thrombolysis, in-hospital rehabilitation services, in-hospital patient education, and availability of clinician-led stroke registries. All candidate variables and first-degree interactions were tested. In the final model, we only included statistically significant variables because model performance did not improve and indicators of hospital-level variability did not change when developing more-saturated models. Multilevel logistic regression models were estimated with the xtmelogit procedure in Stata 11.1.

Measures of interhospital variability

To measure the change of interhospital variability in noncompliance with the composite at each step, we calculated the percentage change of hospital variability of the more complex model compared with the “empty” model.
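
A minimal sketch of this percentage change, assuming it is computed from the hospital-level random-intercept variances of the two models, is given below. The variance values are hypothetical round numbers and do not come from the fitted models.

```python
def percent_change_in_variance(var_empty, var_model):
    """Percentage reduction in hospital-level variance relative to the empty model."""
    if var_empty <= 0:
        raise ValueError("the empty-model variance must be positive")
    return 100.0 * (var_empty - var_model) / var_empty


# Hypothetical variances: 0.40 in the empty model, 0.20 after adding covariates.
change = percent_change_in_variance(0.40, 0.20)
print(f"change in hospital variance: {change:.1f}%")
```

A positive value means that the added covariates account for part of the between-hospital variance; a negative value can occur when adjustment uncovers differences that the case mix had masked.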

To measure the magnitude of interhospital variability, we estimated the median odds ratio (MOR) for each model. The MOR is defined as the median value of the OR between the hospital at higher risk and the hospital at lower risk when randomly picking out two hospitals.6 In this study, the MOR shows the extent to which the probability of noncompliance with the composite at each opportunity is determined by the hospital. If the MOR equals one, there would be no differences between hospitals. If the MOR is large, hospital differences would still be relevant to understand variations in noncompliance with the composite, thus leaving a large amount of interhospital variability unexplained.
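
For readers who want to reproduce the calculation, the sketch below uses the formula from the tutorial cited above,6 MOR = exp(√(2 × V) × 0.6745), where V is the hospital-level variance on the log-odds scale and 0.6745 is the 75th percentile of the standard normal distribution. The function names and the inversion helper are assumptions added for illustration.

```python
import math
from statistics import NormalDist

# 75th percentile of the standard normal distribution (about 0.6745).
Z75 = NormalDist().inv_cdf(0.75)


def median_odds_ratio(hospital_variance):
    """MOR for a random-intercept variance on the log-odds scale."""
    return math.exp(math.sqrt(2.0 * hospital_variance) * Z75)


def variance_from_mor(mor):
    """Invert the MOR formula to recover the implied hospital-level variance."""
    return (math.log(mor) / Z75) ** 2 / 2.0


print(median_odds_ratio(0.0))             # no hospital variance gives a MOR of 1
print(round(variance_from_mor(1.63), 2))  # variance implied by a MOR of 1.63
```

Under this formula, a MOR of 1.63 corresponds to a hospital-level variance of roughly 0.26 on the log-odds scale.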

To take interhospital variability into account when interpreting associations between hospitals’ characteristics and noncompliance with the composite, we estimated the 80% interval OR (IOR-80). The IOR-80 is the middle 80% interval for ORs between two opportunities with different hospital-level covariate patterns. The interval is narrow if interhospital variability is small, and it is wide if interhospital variability is large. If the interval contains 1, interhospital variability is large in comparison with the effect of hospital level characteristics. If the interval does not contain 1, the effect of hospital level characteristics is large in comparison with the unexplained interhospital variability. Methods and formulas to compute indexes of interhospital variability were obtained from Merlo et al.6
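
The interval can be sketched with the formula from the same tutorial,6 exp(β ± 1.2816 × √(2 × V)), where β is the log OR of the hospital-level covariate, V is the residual hospital-level variance, and 1.2816 is the 90th percentile of the standard normal distribution. The input values below are hypothetical and the function name `interval_odds_ratio_80` is an assumption.

```python
import math
from statistics import NormalDist

# 90th percentile of the standard normal distribution (about 1.2816).
Z90 = NormalDist().inv_cdf(0.90)


def interval_odds_ratio_80(odds_ratio, hospital_variance):
    """Return (lower, upper) bounds of the 80% interval odds ratio."""
    beta = math.log(odds_ratio)
    spread = Z90 * math.sqrt(2.0 * hospital_variance)
    return math.exp(beta - spread), math.exp(beta + spread)


# Hypothetical inputs: a covariate OR of 0.5 and a residual variance of 0.25.
lower, upper = interval_odds_ratio_80(0.5, 0.25)
print(f"IOR-80: {lower:.2f} to {upper:.2f}")
```

With zero residual variance the interval collapses to the OR itself, which is why a wide interval signals that unexplained differences between hospitals dominate the covariate effect.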

Results

Among 1,761 patients included in the 47 participating hospitals, 1,697 survived beyond the first 72 hours poststroke. For 11 patients, none of the selected iQMs was indicated and they were excluded. Of the remaining 1,686 patients, 171 (10.2%) died within the first 30 days after stroke. Baseline characteristics of patients can be seen in Table 1.

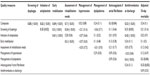

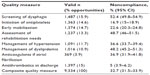

There were 9,334 opportunities for compliance with iQMs, and their distribution across the iQMs is shown in Table 2. The average numbers of opportunities (minimum–maximum) per patient and per hospital were 5.5 (1–8) and 198.6 (65–368), respectively. Noncompliance with the composite was 32.7% (95% CI, 31.5%–33.9%).

Table 2 Number and proportion of opportunities for compliance with each individual quality measure and percentage of noncompliance

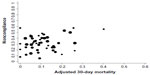

When QMs were aggregated at the hospital level, the correlation between noncompliance with the composite and risk-adjusted mortality at 30 days was 0.24 and was not significant (Table 3 and Figure 1). The only iQMs that correlated with 30-day mortality were management of dyslipidemia and anticoagulants in the case of atrial fibrillation. The composite was highly correlated with screening of dysphagia, initiation of antiplatelets, early mobilization, assessment of rehabilitation needs, and antithrombotics at discharge. Correlation was very low with management of hypertension.
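
As a rough plausibility check of the reported significance level, the sketch below approximates a two-sided P-value for a Pearson correlation by using the Fisher z-transformation. This approximation is an assumption of the example and is not necessarily the test used in the analysis; the function name is hypothetical.

```python
import math
from statistics import NormalDist


def fisher_z_p_value(r, n):
    """Approximate two-sided P-value for a Pearson correlation r with n pairs."""
    # Under the null hypothesis, atanh(r) * sqrt(n - 3) is approximately
    # standard normal.
    z = math.atanh(r) * math.sqrt(n - 3)
    return 2.0 * (1.0 - NormalDist().cdf(abs(z)))


# Correlation of 0.24 across the 47 participating hospitals.
p = fisher_z_p_value(0.24, 47)
print(f"approximate P-value: {p:.2f}")
```

With 47 hospitals and a correlation of 0.24, the approximation gives a P-value close to 0.1, in line with the nonsignificant result reported above.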

Figure 1 Scatter plot of noncompliance with the composite quality measure against adjusted 30-day mortality.

Before adjustment, hospital characteristics that showed a significant association with noncompliance with the composite were bed size (with hospitals larger than 500 beds showing worse compliance) and annual number of stroke admissions (hospitals with 350 or more stroke admissions/year had worse compliance and hospitals with 150–350 stroke admissions/year had better compliance with the composite; Table 4). After adjustment, the individual variables significantly associated with noncompliance with the composite were age (J-shaped relationship) and being unable to walk, whereas hypertension and prestroke independence for activities of daily living were significantly associated with a better compliance (Table 5) (See Table S2 in Supplementary material for information on missing data). At the hospital level, hospital size (intermediate number of stroke admissions) and having a clinician-led registry of stroke care activity were significantly associated with better compliance with the composite. Interhospital variability remained high after adjustment (MOR, 1.63; 95% CI, 1.02–5.1), although it was significantly reduced when hospital level variables were added to the model (change, 49.4%). Figure 2 graphically shows the effect of the number of stroke admissions and the availability of stroke registries, taking sample size (number of opportunities in each hospital) into account. Although there is a high variability among hospitals, outliers for better performance of the composite QM (lower noncompliance) were hospitals with an intermediate number of stroke admissions (triangles in Figure 2A) and hospitals with a stroke registry (triangles in Figure 2B).

Table 4 Noncompliance with the composite, aggregated at the hospital level, according to hospital characteristics

Discussion

In line with recent developments in quality metrics, we developed an opportunity-based composite QM. We aimed to evaluate performance of the composite QM at the hospital level by examining its relationship to risk-adjusted mortality at 30 days and by assessing the variability of noncompliance with the composite across hospitals, using a multilevel approach. The present analysis suggests that a composite based on opportunities of performing eight iQMs selected on the basis of adequate evidence and their association with mortality might be a useful tool for a global quality assessment of health providers. In addition, it gives new insights about the relationship between hospitals’ structural resources and quality of stroke care.

Although major advances in the development of composites have been made in the context of heart disease,7–11 the conceptual framework and practical recommendations developed to date are broad enough to be applicable to other health conditions. To our knowledge, development and validation of a composite for in-hospital stroke care has only been partially assessed.12,13

Most studies analyzing the relationship between quality of care and outcomes at the hospital level found only weak14,15 to moderate associations,8,10,11,16 whereas others found no association.17 In our data set, limited to 47 participating hospitals, a significance level of 0.05 may be too strict. Thus, given the magnitude of the observed correlation (0.24) and the shape of the scatter plot of noncompliance against adjusted mortality (Figure 1), we concluded that a correlation between noncompliance with the composite aggregated at the hospital level and risk-adjusted 30-day mortality is plausible, although it is weak. A weak association is also found when analyzing iQMs, with the exception of anticoagulants in the case of atrial fibrillation and management of dyslipidemia, which showed moderate correlations. If finding significant associations between quality of care and outcome at the patient level is a difficult task, demonstration of such associations when data are aggregated at the hospital level is even more difficult. This might be because of the sample size, which is much smaller when analyzing hospital data instead of patient data. Moreover, in real-life practice, the expected relationship between process of stroke care and outcome is prone to being influenced by confounders. We would expect stronger correlations when exploring other outcomes more closely related to hospital care, such as functional outcome or 30-day readmission rate.7

Our selection of iQMs to compute the composite might be arguable.18 The purpose of our composite was to summarize performance on several process measures that are implemented to improve outcome. Thus, we combined iQMs that were based on scientific evidence and had previously shown associations with mortality at the patient level.3 As a consequence, it seems plausible that improvement in each iQM would correspond to an improvement in outcome in the shorter or longer term. A composite based on iQMs already linked to outcome would facilitate a global assessment of quality to then focus on specific interventions to promote improvement. We included neither intravenous thrombolysis nor stroke unit care as individual QMs in our study. The reason is that we have a regionalized system of acute stroke care that establishes clear hospital categories, with Primary and Comprehensive Stroke Centers being responsible for intravenous thrombolysis. Similarly, stroke units are available at all Primary Stroke Centers; in contrast, only some community hospitals have stroke units available. Therefore, instead of including an individual QM such as “stroke unit care”, which in fact might be considered a composite QM itself, we tried and assessed specific interventions typically provided at such a unit, regardless of the presence/absence of a so-called stroke unit. It could also be argued that our composite fails to comply with the criterion of internal consistency because intercorrelations between some iQMs are low. However, although internal consistency is important for instruments developed in the field of psychometrics, it is less relevant if the goal of the measure is to combine multiple distinct dimensions that separately influence quality through a causal relationship (ie, compliance with each iQM promotes a better quality).19

As happens with iQMs,4 noncompliance with the composite is highly variable across hospitals. After adjustment, we found some structural features that would favor greater compliance. Hospitals with an intermediate number of stroke admissions and those with a clinician-led registry of in-hospital stroke activity showed better performances. Remarkably, only 3.9% of the interhospital variability was explained by the case mix. The addition of the two structural variables had a large effect on the reduction of variability among hospitals, although leaving a large amount of residual variability unexplained. This might indicate that quality of care depends, to a great extent, on factors that are not systematically measured or that are difficult to measure; that is, organizational differences among providers, different attitudes, preferences or knowledge among professionals, and so on.

One might wonder through what mechanism structural variables may influence variability. With regard to the effects of an intermediate number of admissions, it seems reasonable that although there is an acknowledged tendency for improvement in quality with increasing hospital volumes,12 very large volumes could imply greater organizational problems. It is perhaps more difficult to ascertain why running a clinical registry can be associated with better performance. Two mechanisms might be operative. First, complying with the requirements of maintaining a registry could have an educational effect on clinical behavior, leading practitioners toward better compliance with recommendations by acting as a reminder. Second, even if running a registry did not have a direct influence on variability, it might be a marker of more organized care in the corresponding hospitals, thus representing an indirect measurement of unmeasured features.2 Figure 3 provides a plausible interpretation of our multilevel approach to explain the sources of variability and the role of certain structural variables, such as running a stroke registry, as a marker variable for unmeasured care.

Figure 3 A theoretical proposal for sources of interhospital variability of the quality of stroke care.

The retrospective nature of this study might limit the validity of the results. Using clinical records as the data source makes correct definition of eligible patients for each iQM problematic. For instance, determining a patient’s eligibility for the QM “early mobilization” through retrospective review of medical records is challenging, particularly in the absence of positive documentation, because relevant information might be missing or not clearly stated (eg, orthostatic hypotension contraindicating mobilization), thus making it difficult to interpret the sometimes subjective decision-making sequence. In addition, performance of our composite should ideally be tested as a predictor of other outcome measures, such as poststroke functional status or readmission rate, in an independent sample to determine whether the composite is valid for global assessment purposes. Performance of the opportunity-based composite measure should also be compared with other methodological approaches, such as regression-based, latent-trait, or any-or-none composite measures.1,18 Finally, this line of quality-focused research should test to what extent a causal relationship exists between improvement in quality and improvement in outcome, something that is beyond the scope of this study, although it remains necessary.20

Conclusion

One of the objectives, if not the main one, of auditing real-life health care through compliance with guideline-based recommendations is to reduce variability in clinical practice. Thus, analyzing the sources of variability is relevant, and a composite quality measure makes this exercise more feasible. The opportunity-based composite measure needs to be validated, but it provides a simple way of summarizing performance of selected accountability measures,21 and we suggest that a global assessment might promote, in addition to better compliance with specific evidence-based processes, quality-enhancing attitudes and behaviors.

Disclosure

The authors report no conflicts of interest in this work.

References

1. Peterson ED, DeLong ER, Masoudi FA, et al. ACCF/AHA 2010 Position Statement on Composite Measures for Healthcare Performance Assessment: a report of American College of Cardiology Foundation/American Heart Association Task Force on Performance Measures (Writing Committee to Develop a Position Statement on Composite Measures). J Am Coll Cardiol. 2010;55(16):1755–1766.

2. Werner RM, Bradlow ET, Asch DA. Does hospital performance on process measures directly measure high quality care or is it a marker of unmeasured care? Health Serv Res. 2008;43(5 Pt 1):1464–1484. PMID:22568614.

3. Abilleira S, Ribera A, Permanyer-Miralda G, Tresserras R, Gallofré M. Noncompliance with certain quality indicators is associated with risk-adjusted mortality after stroke. Stroke. 2012;43(4):1094–1100.

4. Abilleira S, Gallofré M, Ribera A, Sánchez E, Tresserras R. Quality of in-hospital stroke care according to evidence-based performance measures: results from the first audit of stroke, Catalonia, Spain. Stroke. 2009;40(4):1433–1438.

5. Bonow RO, Bennett S, Casey DE Jr, et al; Heart Failure Society of America. ACC/AHA Clinical Performance Measures for Adults with Chronic Heart Failure: a report of the American College of Cardiology/American Heart Association Task Force on Performance Measures (Writing Committee to Develop Heart Failure Clinical Performance Measures): endorsed by the Heart Failure Society of America. Circulation. 2005;112(12):1853–1887.

6. Merlo J, Chaix B, Ohlsson H, et al. A brief conceptual tutorial of multilevel analysis in social epidemiology: using measures of clustering in multilevel logistic regression to investigate contextual phenomena. J Epidemiol Community Health. 2006;60(4):290–297.

7. Hernandez AF, Fonarow GC, Liang L, Heidenreich PA, Yancy C, Peterson ED. The need for multiple measures of hospital quality: results from the Get with the Guidelines-Heart Failure Registry of the American Heart Association. Circulation. 2011;124(6):712–719.

8. Bradley EH, Herrin J, Elbel B, et al. Hospital quality for acute myocardial infarction: correlation among process measures and relationship with short-term mortality. JAMA. 2006;296(1):72–78.

9. Couralet M, Guérin S, Le Vaillant M, Loirat P, Minvielle E. Constructing a composite quality score for the care of acute myocardial infarction patients at discharge: impact on hospital ranking. Med Care. 2011;49(6):569–576.

10. Eapen ZJ, Fonarow GC, Dai D, et al; Get With The Guidelines Steering Committee and Hospitals. Comparison of composite measure methodologies for rewarding quality of care: an analysis from the American Heart Association’s Get With The Guidelines program. Circ Cardiovasc Qual Outcomes. 2011;4(6):610–618.

11. Peterson ED, Roe MT, Mulgund J, et al. Association between hospital process performance and outcomes among patients with acute coronary syndromes. JAMA. 2006;295(16):1912–1920.

12. Reeves MJ, Gargano J, Maier KS, et al. Patient-level and hospital-level determinants of the quality of acute stroke care: a multilevel modeling approach. Stroke. 2010;41(12):2924–2931.

13. Ross JS, Arling G, Ofner S, et al. Correlation of inpatient and outpatient measures of stroke care quality within veterans health administration hospitals. Stroke. 2011;42(8):2269–2275.

14. McNaughton H, McPherson K, Taylor W, Weatherall M. Relationship between process and outcome in stroke care. Stroke. 2003;34(3):713–717.

15. Werner RM, Bradlow ET. Relationship between Medicare’s hospital compare performance measures and mortality rates. JAMA. 2006;296(22):2694–2702.

16. Stukel TA, Alter DA, Schull MJ, Ko DT, Li P. Association between hospital cardiac management and outcomes for acute myocardial infarction patients. Med Care. 2010;48(2):157–165.

17. Patterson ME, Hernandez AF, Hammill BG, et al. Process of care performance measures and long-term outcomes in patients hospitalized with heart failure. Med Care. 2010;48(3):210–216.

18. Cadilhac D, Kilkenny M, Churilov L, Harris D, Lalor E. Identification of a reliable subset of process indicators for clinical audit in stroke care: an example from Australia. Clinical Audit. 2010;2:67–77.

19. MacKenzie SB, Podsakoff PM, Jarvis CB. The problem of measurement model misspecification in behavioral and organizational research and some recommended solutions. J Appl Psychol. 2005;90(4):710–730.

20. Ryan AM, Doran T. The effect of improving processes of care on patient outcomes: evidence from the United Kingdom’s quality and outcomes framework. Med Care. 2012;50(3):191–199.

21. Chassin MR, Loeb JM, Schmaltz SP, Wachter RM. Accountability measures – using measurement to promote quality improvement. N Engl J Med. 2010;363(7):683–688.

Supplementary materials

Table S1 Estimated odds ratios for 30-day mortality from the model used for prediction of expected mortality (valid n=1,197)

Figure S1 Area under the receiver operating characteristic curve (ROC) for the prediction of 30-day mortality.

© 2014 The Author(s). This work is published and licensed by Dove Medical Press Limited. The full terms of this license are available at https://www.dovepress.com/terms.php and incorporate the Creative Commons Attribution - Non Commercial (unported, v3.0) License.

By accessing the work you hereby accept the Terms. Non-commercial uses of the work are permitted without any further permission from Dove Medical Press Limited, provided the work is properly attributed. For permission for commercial use of this work, please see paragraphs 4.2 and 5 of our Terms.